Introduction: Why Writing a Good Dockerfile Matters More Than You Think

Containers have transformed modern software delivery. Whether you're deploying microservices, APIs, machine learning models, or full-stack applications, Docker plays a central role in DevOps workflows. But here's the truth many teams learn the hard way:

A poorly written Dockerfile can lead to:

- Bloated images

- Security vulnerabilities

- Slow build times

- Deployment instability

- Production failures

That's why understanding dockerfile best practices is essential for every DevOps engineer.

A Dockerfile is not just a build script; it's the foundation of your containerized application. The way you structure it affects performance, scalability, cost, and security.

In this comprehensive guide, you'll learn:

- Why Dockerfile optimization matters

- Step-by-step best practices

- Security improvements

- Performance tuning techniques

- Real-world examples

- Common mistakes to avoid

- Advanced DevOps container strategies

Let's dive in.

What Is a Dockerfile?

A Dockerfile is a text file containing instructions to build a Docker image. These instructions define:

- Base image

- Dependencies

- Environment variables

- Application files

- Startup commands

When you run:

docker build .

Docker reads your Dockerfile and creates an image layer by layer.
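Here is a minimal sketch of a Dockerfile that covers each element listed above. It assumes a hypothetical Python app whose dependencies are listed in requirements.txt and whose entry point is app.py. Both file names are illustrative, not taken from a real project.

```dockerfile
# Base image: an official, slim Python image
FROM python:3.10-slim

# Working directory for all following instructions
WORKDIR /app

# Dependencies: copy the manifest first so this layer stays cached
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Environment variables: non-secret runtime configuration only
ENV APP_ENV=production

# Application files
COPY . .

# Startup command (app.py is a hypothetical entry point)
CMD ["python", "app.py"]
```

Running docker build -t my-app . next to this file would produce an image tagged my-app. The tag name is also only an example.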

Following dockerfile best practices ensures your image is lightweight, secure, and efficient.

Why Dockerfile Optimization Is Critical in DevOps

In DevOps environments:

- Containers are deployed frequently

- Images are stored in registries

- Applications scale dynamically

- Security is continuously monitored

An inefficient Dockerfile impacts:

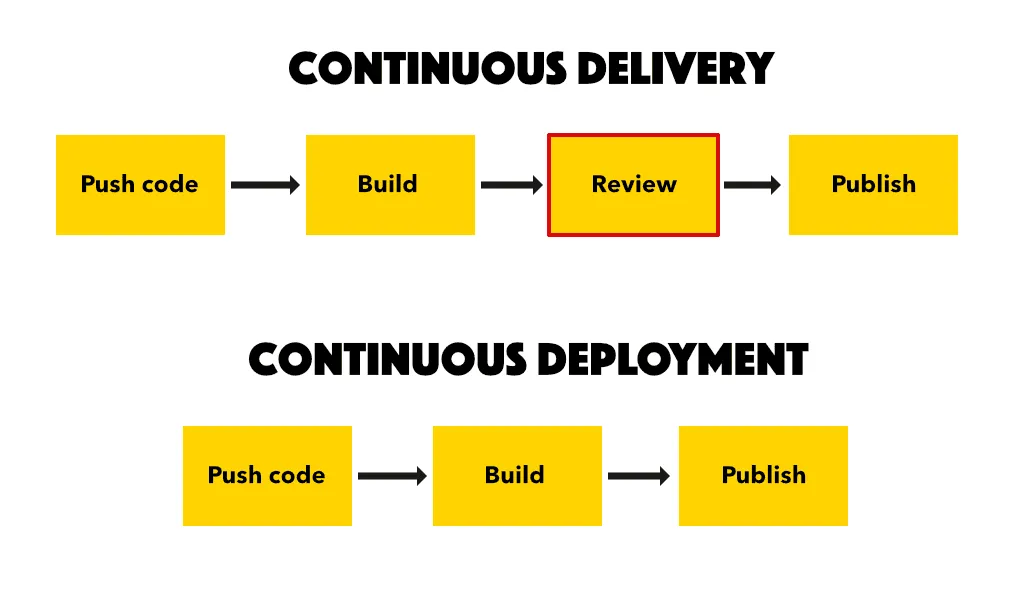

- CI CD build speed

- Cloud storage costs

- Deployment reliability

- Security posture

Optimizing Dockerfiles improves the entire DevOps pipeline.

Core Dockerfile Best Practices

Let's explore practical and actionable dockerfile best practices every engineer should follow.

1. Use Official and Minimal Base Images

Always start with trusted base images.

Instead of:

FROM ubuntu

Prefer:

FROM python:3.10-slim

Why?

- Smaller image size

- Reduced attack surface

- Faster build times

Minimal images improve performance and security simultaneously.

2. Use Specific Image Versions (Avoid latest)

Avoid:

FROM node:latest

Use:

FROM node:18.17.1

Why?

- Ensures consistency

- Prevents unexpected breaking changes

- Improves reproducibility

Version pinning is one of the most important dockerfile best practices.
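A minimal sketch of keeping the pinned version in one place, so an upgrade becomes a one-line change. NODE_VERSION is an illustrative argument name, not a Docker convention.

```dockerfile
# An ARG declared before FROM can parameterize the base image tag
ARG NODE_VERSION=18.17.1
FROM node:${NODE_VERSION}-slim

WORKDIR /app
```

You could trial an upgrade without editing the file by running docker build --build-arg NODE_VERSION=18.18.0 . instead. Publishers can re-point a tag to a new image. For byte-for-byte reproducibility, you can additionally pin the image digest, in the form node:18.17.1@sha256:<digest>.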

3. Minimize the Number of Layers

Each RUN, COPY, and ADD instruction creates a new filesystem layer. Other instructions, such as ENV or CMD, only record metadata.

Instead of:

RUN apt-get update
RUN apt-get install -y curl

Combine them:

RUN apt-get update && apt-get install -y curl

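Benefits:

- Smaller image size

- Cleaner build process

- Faster builds

- No stale package index: a cached apt-get update layer can't be silently reused when the apt-get install line changes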

4. Leverage Multi-Stage Builds

Multi-stage builds separate:

- Build environment

- Production runtime

Example:

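FROM node:18 AS build
WORKDIR /app
COPY package.json .
RUN npm install
COPY . .
RUN npm run build

FROM node:18-slim
WORKDIR /app
COPY --from=build /app/dist ./dist
CMD ["node", "dist/index.js"]

This final stage copies only dist, so it assumes the build step bundles all runtime dependencies. If it doesn't, install production dependencies in the final stage as well.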

Why This Is Powerful:

- Removes unnecessary build tools

- Reduces final image size

- Improves security

Multi-stage builds are essential dockerfile best practices in production environments.
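The payoff is largest for compiled languages, where the final image needs only the binary. Here is a sketch assuming a hypothetical Go module with go.mod, go.sum, and a main package at the repository root. The distroless runtime image is one common choice, not the only one.

```dockerfile
# Build stage: full Go toolchain
FROM golang:1.22 AS build
WORKDIR /src

# Download modules first so this layer is cached between builds
COPY go.mod go.sum ./
RUN go mod download

COPY . .
# CGO_ENABLED=0 produces a statically linked binary that needs no libc
RUN CGO_ENABLED=0 go build -o /out/server .

# Runtime stage: no shell, package manager, or compiler
FROM gcr.io/distroless/static-debian12
COPY --from=build /out/server /server

# Distroless images ship an unprivileged "nonroot" user
USER nonroot:nonroot
ENTRYPOINT ["/server"]
```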

5. Use a .dockerignore File

Exclude unnecessary files:

- node_modules

- .git

- Logs

- Test files

Example .dockerignore:

node_modules
.git
*.log

This reduces build context size and speeds up image creation.

6. Avoid Running as Root User

By default, containers run as root.

This is risky.

Add a non-root user:

RUN useradd -m appuser
USER appuser

Why?

- Reduces security vulnerabilities

- Follows least privilege principle

Security-focused dockerfile best practices always avoid root execution.
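A slightly fuller sketch for a Debian-based image, assuming a hypothetical Node.js app started from index.js. It also gives the unprivileged user ownership of the application files.

```dockerfile
FROM node:18-slim

# Create an unprivileged system group and user (Debian-based image)
RUN groupadd --system appgroup \
    && useradd --system --gid appgroup --create-home appuser

WORKDIR /app

# Copy the app and hand ownership to the unprivileged user
COPY --chown=appuser:appgroup . .

# The container process runs as appuser from here on
USER appuser

CMD ["node", "index.js"]
```

Official Node.js images already include an unprivileged user named node, so USER node is a shorter alternative there. On Alpine-based images, useradd is not available by default. The BusyBox equivalent is addgroup -S appgroup && adduser -S appuser -G appgroup.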

7. Optimize Layer Caching

Order instructions properly.

Incorrect order:

COPY . .
RUN npm install

Correct order:

COPY package.json .
RUN npm install
COPY . .

This ensures dependencies are cached unless package.json changes.

8. Clean Up Unnecessary Files

After installing packages:

RUN apt-get update \
    && apt-get install -y curl \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/*

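Cleaning reduces image size, but only when it happens in the same RUN instruction as the install. Files deleted in a later layer still exist in the earlier layer that created them.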
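The same-layer rule applies to language package managers too. Here is a sketch for npm, assuming a hypothetical project that commits a package-lock.json (npm ci requires one). The commented-out lines show the apt anti-pattern for contrast.

```dockerfile
FROM node:18-slim
WORKDIR /app

# Anti-pattern (left commented out): deleting in a separate RUN does not
# shrink the image, because the apt lists stay in the earlier layer
# RUN apt-get update && apt-get install -y curl
# RUN rm -rf /var/lib/apt/lists/*

# Install production dependencies and clear npm's cache in one RUN,
# so the cache never lands in a layer
COPY package.json package-lock.json ./
RUN npm ci --omit=dev && npm cache clean --force

COPY . .
```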

9. Use Environment Variables Properly

Define runtime variables:

ENV APP_ENV=production

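Avoid hardcoding secrets inside Dockerfiles. ENV values are stored in the image, and anyone with access to it can read them, for example with docker image inspect.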
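ARG and ENV serve different lifetimes, and this sketch shows both. APP_VERSION is a hypothetical setting used only for illustration.

```dockerfile
FROM python:3.10-slim

# Build-time setting: exists only while the image is being built
ARG APP_VERSION=dev

# Runtime settings: persisted in the image and visible to the container
ENV APP_ENV=production \
    APP_VERSION=${APP_VERSION}
```

Build arguments are overridden with docker build --build-arg APP_VERSION=1.4.0 ., and runtime values with docker run -e APP_ENV=staging. Build argument values can show up in docker history, so they are no place for secrets either.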

10. Use Health Checks

Add health monitoring:

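HEALTHCHECK CMD curl --fail http://localhost:3000/ || exit 1

This check requires curl inside the image, which slim base images often omit. Docker reports the result as the container's health status, and Docker Compose and Docker Swarm can act on that status. Kubernetes ignores HEALTHCHECK and relies on its own liveness and readiness probes instead.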
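A sketch that installs curl first and tunes the check's timing. Port 3000 comes from the example above; the root path and the interval, timeout, start-period, and retry values are illustrative assumptions, not recommendations.

```dockerfile
FROM node:18-slim

# Slim images do not ship curl, so install it and clean up in one layer
RUN apt-get update \
    && apt-get install -y curl \
    && rm -rf /var/lib/apt/lists/*

# Probe every 30s with a 5s limit per check, give the app 10s to start,
# and mark the container unhealthy after 3 consecutive failures
HEALTHCHECK --interval=30s --timeout=5s --start-period=10s --retries=3 \
  CMD curl --fail http://localhost:3000/ || exit 1
```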

Security-Focused Dockerfile Best Practices

Security is non-negotiable in DevOps.

1. Scan Images for Vulnerabilities

Use tools like:

- Trivy

- Docker Scout

- Snyk

Integrate scanning into CI/CD pipelines.

2. Reduce Attack Surface

Avoid unnecessary packages.

Install only what your application needs.
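On Debian-based images, apt installs "recommended" extras by default. This sketch installs only the named packages and their hard dependencies. Curl stands in for whatever the application actually needs.

```dockerfile
FROM debian:bookworm-slim

# --no-install-recommends skips optional recommended packages;
# ca-certificates is listed explicitly so curl can verify HTTPS certificates
RUN apt-get update \
    && apt-get install -y --no-install-recommends curl ca-certificates \
    && rm -rf /var/lib/apt/lists/*
```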

3. Avoid Hardcoded Secrets

Never include:

- API keys

- Database passwords

- Access tokens

Inject them at runtime through environment variables or a secret manager instead of baking them into the image.
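When a secret is needed during the build itself, such as a token for a private package index, a BuildKit secret mount keeps it out of every layer. This sketch assumes BuildKit (the default builder in current Docker releases), a secret id of pip_token, and a hypothetical private index at pypi.example.com.

```dockerfile
# syntax=docker/dockerfile:1
FROM python:3.10-slim
WORKDIR /app
COPY requirements.txt .

# The secret is mounted at /run/secrets/pip_token for this RUN only
# and is not written to any image layer
RUN --mount=type=secret,id=pip_token \
    PIP_INDEX_URL="https://__token__:$(cat /run/secrets/pip_token)@pypi.example.com/simple" \
    pip install --no-cache-dir -r requirements.txt
```

The secret is supplied at build time with docker build --secret id=pip_token,src=./pip_token.txt . (the file name is illustrative).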

Performance Optimization Tips

Containers must be lightweight and efficient.

1. Use Alpine Images Carefully

Alpine images are smaller, but because Alpine uses musl libc instead of glibc, they can sometimes:

- Cause compatibility issues

- Increase build complexity

Use only when appropriate.

2. Use Layer Caching in CI/CD

Cache dependencies between builds to reduce build time.
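Beyond layer caching, BuildKit cache mounts let a package manager reuse its download cache across builds without storing it in the image. This sketch assumes a hypothetical Node.js project with a committed package-lock.json; /root/.npm is npm's default cache location for the root user.

```dockerfile
# syntax=docker/dockerfile:1
FROM node:18-slim
WORKDIR /app
COPY package.json package-lock.json ./

# npm's cache persists between builds on the same builder
# but is never written into the image
RUN --mount=type=cache,target=/root/.npm \
    npm ci

COPY . .
RUN npm run build
```

Cache mounts live on the builder, so ephemeral CI runners lose them between jobs. For those runners, docker buildx build can export and import the layer cache through a registry with --cache-to type=registry,ref=<image>:buildcache,mode=max and --cache-from type=registry,ref=<image>:buildcache.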

3. Compress Static Assets

If serving web apps:

- Minify JavaScript

- Compress CSS

- Optimize images

Smaller images pull faster, which shortens deployments and scale-out.
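For a static front end, a multi-stage build can run the minifying build and ship only its output behind a web server. This sketch assumes a hypothetical project whose npm run build script writes minified assets to dist. Nginx is one possible server, not a requirement.

```dockerfile
FROM node:18 AS build
WORKDIR /app
COPY package.json package-lock.json ./
RUN npm ci
COPY . .
# Assumed to minify and bundle assets into /app/dist
RUN npm run build

FROM nginx:1.25-alpine
# Ship only the compiled assets; Node.js and the sources stay behind
COPY --from=build /app/dist /usr/share/nginx/html
```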

Real-World Example

A DevOps team deploying a Node.js app faced:

- 1.2 GB image size

- Slow CI/CD builds

- Security warnings

After applying dockerfile best practices:

- Image size reduced to 280 MB

- Build time decreased by 40 percent

- Security vulnerabilities reduced significantly

Optimizing Dockerfiles directly improved DevOps efficiency.

Common Dockerfile Mistakes to Avoid

Avoid these errors:

- Using the latest tag

- Running containers as root

- Installing unnecessary packages

- Ignoring multi-stage builds

- Copying entire project without filtering

- Not cleaning temporary files

Small mistakes compound into major operational issues.

Advanced Strategies for DevOps Engineers

For production-grade environments:

1. Use Immutable Infrastructure

Rebuild containers instead of modifying running ones.

2. Integrate Dockerfile Linting

Use Hadolint to enforce best practices.

3. Automate Image Updates

Monitor base image updates and rebuild automatically.

4. Combine Docker with Kubernetes Best Practices

Use:

- Resource limits

- Liveness probes

- Rolling updates

Dockerfile optimization supports orchestration success.

Short Summary

Key dockerfile best practices include:

- Use minimal official base images

- Avoid latest tags

- Implement multi-stage builds

- Reduce image layers

- Avoid root user

- Clean up unnecessary files

- Use .dockerignore

- Scan for vulnerabilities

Following these practices ensures:

- Smaller images

- Faster builds

- Improved security

- Reliable deployments

Conclusion: Build Smarter Containers for DevOps Success

Dockerfiles are not just configuration files; they're engineering decisions.

When you apply proper dockerfile best practices, you:

- Improve CI/CD performance

- Strengthen security posture

- Reduce cloud costs

- Enhance system reliability

DevOps excellence requires attention to detail at every level, including your Dockerfile.

Write clean, secure, optimized Dockerfiles. Your future deployments will thank you.

FAQs

What are dockerfile best practices?

Dockerfile best practices include using minimal base images, reducing layers, implementing multi-stage builds, avoiding root user, and optimizing caching.

Why should I avoid using latest tag?

Using the latest tag can cause unexpected changes in builds. Version pinning ensures reproducibility and stability.

How do multi-stage builds improve Docker images?

They separate build dependencies from runtime, resulting in smaller and more secure final images.

Should Docker containers run as root?

No. Running as root increases security risks. Always use a non-root user in production containers.

How can I reduce Docker image size?

Use slim base images, combine layers, remove unnecessary files, and implement multi-stage builds.