Test Case Review Process: Building a High-Fidelity Validation Engine (2026)

In the high-velocity engineering cultures of 2026, the mantra "Test everything" has been replaced by "Test exactly what matters." As organizations scale, the bottleneck is no longer writing tests—thanks to AI-augmented authoring—but ensuring that those tests are accurate, efficient, and maintainable. A test suite full of redundant, fragile, or logically flawed cases is a massive liability that drains developer productivity and provides a false sense of security.

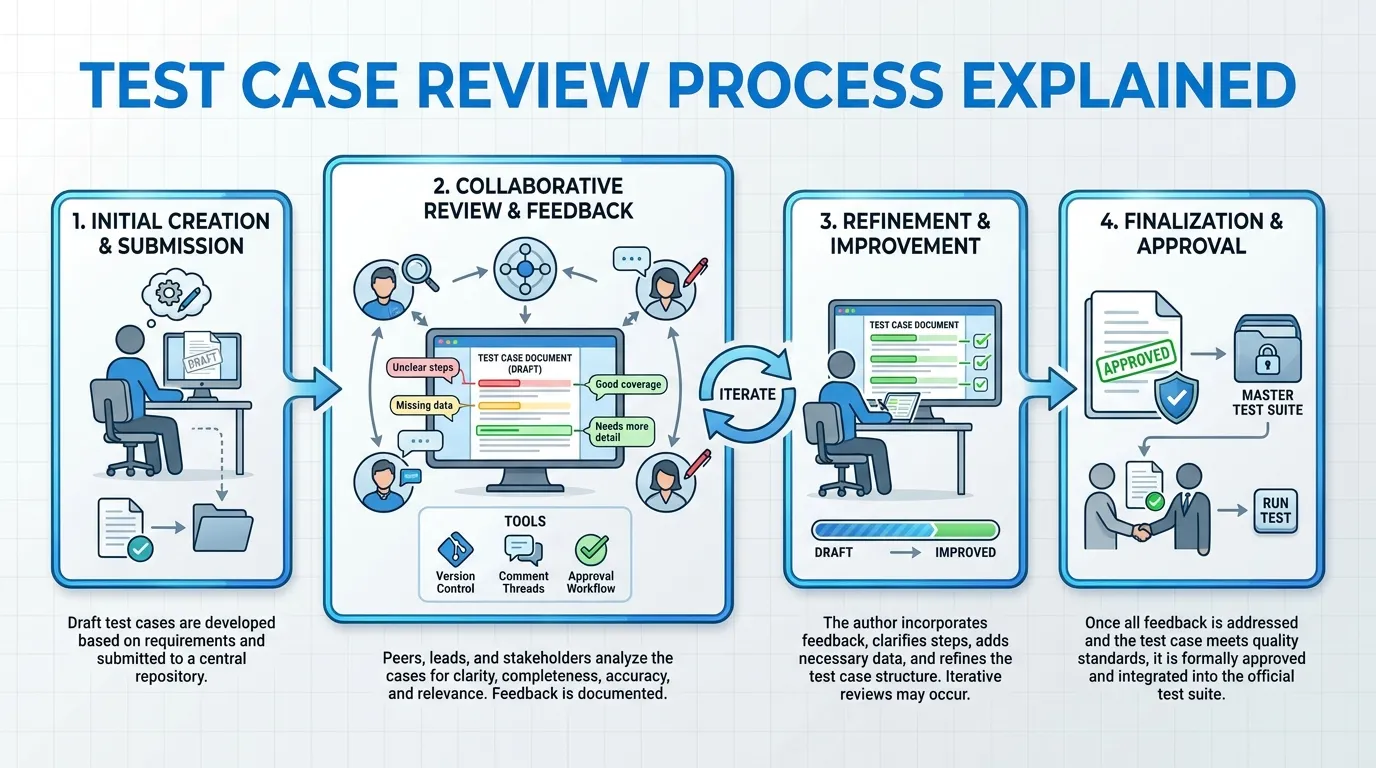

This is where a rigorous test case review process becomes the most critical quality gate in the lifecycle. This guide explores the modern mechanics of peer-reviewing tests, from manual logic audits to AI-driven coverage analysis.

1. Why Test Case Reviews are Non-Negotiable

A test case is code. Like any other code in the enterprise, it must be peer-reviewed to prevent regressions and technical debt.

1. The Cost of "False Greens"

The most dangerous failure in QA is a test that passes when it should have failed (a False Negative: the test misses a real defect). This usually happens because the assertion logic is flawed or the mock data is too permissive. Peer review is the most direct way to catch these "Silent Failures" before they lead to production outages.

2. Eliminating Redundancy

At scale, it is common for different teams to write overlapping tests for the same microservice. A centralized or peer-based review process identifies these redundancies, keeping the CI/CD pipeline lean and reducing compute costs.

2. The Anatomy of an Effective Review Process

A modern review process in 2026 is no longer a "Meeting." It is a distributed, asynchronous workflow integrated directly into the Git lifecycle.

1. The Pre-Review Checklist (Self-Audit)

Before submitting a test for review, the author must verify:

- Decoupled State: Does the test clean up its data? Can it run in parallel without colliding with other tests?

- Atomic Logic: Does the test verify exactly one scenario, or is it a "Monolithic" script that is hard to debug?

- Wait Strategy: Does the test use "Smart Waits" (polling for state) or legacy "Hard Sleeps," which slow down the pipeline?
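The "Smart Wait" item can be sketched as a small polling helper in Python. `wait_until` and its timeout defaults are hypothetical and do not belong to any specific framework. Most UI and API test frameworks ship their own equivalent, and that built-in should be preferred when it exists.

```python
import time
from collections.abc import Callable
from typing import TypeVar

T = TypeVar("T")

# Named defaults instead of magic numbers; the values are illustrative assumptions.
DEFAULT_TIMEOUT_S = 10.0
DEFAULT_POLL_INTERVAL_S = 0.1


def wait_until(
    condition: Callable[[], T],
    timeout_s: float = DEFAULT_TIMEOUT_S,
    poll_interval_s: float = DEFAULT_POLL_INTERVAL_S,
    description: str = "condition",
) -> T:
    """Poll `condition` until it returns a truthy value or `timeout_s` elapses.

    Returns the truthy value so the caller can assert on it directly.
    Raises TimeoutError with a readable message instead of hanging or
    silently continuing.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"timed out after {timeout_s}s waiting for {description}")
        time.sleep(poll_interval_s)
```

A reviewer would then ask for a pattern like `time.sleep(5)` followed by a check to be replaced with something like `wait_until(lambda: get_status(order_id) == "PAID", description="order to be PAID")`. Here `get_status` stands in for whatever query the test already uses. The test then finishes as soon as the state is reached instead of always paying the full sleep.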

2. Peer Review Archetypes

- The Logic Review: A fellow SDET reviews the script to ensure the assertions are logically sound (they would fail if the behavior were wrong) and the edge cases are covered.

- The Developer Review: The developer who wrote the feature reviews the test to ensure it aligns with the intended business logic and covers the "Hidden" failure paths of the code.

3. Reviewing Automated Tests as Code

In 2026, we review "Test Scripts" as rigorously as we review "API Handlers."

1. Assertions Audit

- The Trap: Asserting that a button "Exists" rather than asserting that the "Action succeeded."

- The Review: Ensuring that assertions target the system state (e.g., database changes, API responses) rather than just ephemeral UI states.
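The contrast between a UI-level assertion and a system-state assertion can be sketched with Python's built-in `sqlite3`. The `orders` schema and the `place_order` handler are hypothetical stand-ins for a real service. The banner check alone is the kind of assertion a reviewer should flag. The row check verifies that the action actually succeeded.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id TEXT, amount_cents INTEGER, status TEXT)"
    )


def place_order(conn: sqlite3.Connection, user_id: str, amount_cents: int) -> dict:
    """Hypothetical handler: persists the order and returns an API-style response."""
    cur = conn.execute(
        "INSERT INTO orders (user_id, amount_cents, status) VALUES (?, ?, ?)",
        (user_id, amount_cents, "CONFIRMED"),
    )
    conn.commit()
    return {"order_id": cur.lastrowid, "banner": "Order placed!"}


def test_order_persisted() -> None:
    conn = sqlite3.connect(":memory:")  # throwaway database, isolated per test
    create_schema(conn)
    response = place_order(conn, "user-42", 2_500)

    # Shallow check: on its own, this only proves the banner text rendered.
    assert response["banner"] == "Order placed!"

    # Deep check: the system state changed exactly as the requirement says.
    row = conn.execute(
        "SELECT user_id, amount_cents, status FROM orders WHERE id = ?",
        (response["order_id"],),
    ).fetchone()
    assert row == ("user-42", 2_500, "CONFIRMED"), f"unexpected order row: {row}"
    conn.close()
```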

2. Flakiness Detection

- The Strategy: During the review phase, the new test is run 20 times in a "Stress Environment" (a repeat loop with retries disabled). If it fails even once without a code change, the review is rejected.

- The Goal: Stopping flaky tests before they reach the "Main" branch.
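The stress step can be sketched as a plain repeat loop in Python. The helper names are hypothetical. The key design choice is that failures are recorded and never retried, because a retry would hide exactly the intermittency the audit is looking for.

```python
from collections.abc import Callable

REQUIRED_RUNS = 20  # repeat count from the review policy described above


def stress_run(test_fn: Callable[[], None], runs: int = REQUIRED_RUNS) -> list[tuple[int, str]]:
    """Run `test_fn` `runs` times with no retries; return (run_index, error) per failure."""
    failures: list[tuple[int, str]] = []
    for i in range(runs):
        try:
            test_fn()
        except Exception as exc:  # AssertionError included; record it, do not retry
            failures.append((i, repr(exc)))
    return failures


def review_verdict(test_fn: Callable[[], None], runs: int = REQUIRED_RUNS) -> str:
    """Approve only if every single run passed."""
    failures = stress_run(test_fn, runs)
    if not failures:
        return "APPROVE"
    return f"REJECT ({len(failures)}/{runs} runs failed)"
```

Twenty clean runs act as a filter, not a proof. A test that fails 1% of the time still passes all 20 runs with probability 0.99^20 ≈ 0.82. One that fails 5% of the time slips through with probability 0.95^20 ≈ 0.36. At 50 runs, the 1% case drops to about 0.99^50 ≈ 0.61, so longer loops are worth the compute on critical paths.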

4. AI-Augmented Test Reviews

The most significant evolution in 2026 is the use of AI to perform the "First Pass" of a review.

1. Automated Logic Verification

AI agents analyze the "Requirements" (from Jira/Linear) and compare them against the "Test Script."

- The Result: The AI flags: "You missed the requirement for 'Guest Checkout' validation in this script; please add a test case for unauthenticated users."
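The AI agent's comparison is model-driven, but the question it answers (does every requirement map to at least one test?) can be sketched deterministically when tests carry requirement IDs. The `TraceableTest` record, the `REQ-…` IDs, and the tagging convention below are hypothetical. They are not a Jira or Linear API.

```python
from dataclasses import dataclass, field


@dataclass
class TraceableTest:
    """A test script plus the requirement IDs it claims to cover (hypothetical scheme)."""
    name: str
    requirement_ids: set[str] = field(default_factory=set)


def uncovered_requirements(
    requirements: dict[str, str], tests: list[TraceableTest]
) -> dict[str, str]:
    """Return the requirements (id -> description) that no test claims to cover."""
    covered: set[str] = set().union(*(t.requirement_ids for t in tests))
    return {rid: desc for rid, desc in requirements.items() if rid not in covered}


if __name__ == "__main__":
    requirements = {
        "REQ-101": "Registered user can check out",
        "REQ-102": "Guest checkout validates the email address",
    }
    tests = [TraceableTest("test_registered_checkout", {"REQ-101"})]
    print(uncovered_requirements(requirements, tests))
    # {'REQ-102': 'Guest checkout validates the email address'}
```

A check like this only proves that a test is linked to a requirement, not that the test is correct. The human review still has to judge the assertions.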

2. Overlap and Redundancy Analysis

AI scans the entire existing test repository (5,000+ scripts) and flags: "This new test for 'Password Reset' is 92% identical to an existing test in the 'User-Auth' module. Consider merging or deleting."

5. Metrics of a Healthy Review Process

You cannot improve what you do not measure.

| Metric | Goal in 2026 | Why it Matters |
|---|---|---|
| Review Turnaround Time | < 2 hours | Keeps reviews from blocking the developer's merge. |
| Test Rejection Rate | 15% - 20% | High enough to show rigor, low enough to show that authors are well trained. |
| Post-Merge Flakiness | < 0.1% | Measures the effectiveness of the "Stress Environment" audit. |
| Requirement Coverage | 100% (critical requirements) | Ensures every critical business rule is covered by at least one validated test. |
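The first three metrics can be computed from ordinary review records, as in the Python sketch below. The `Review` record is hypothetical, and so are two definitions the table leaves open: turnaround is measured as a median, and post-merge flakiness is flaky failures divided by total post-merge runs. Both are assumptions of this sketch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median

TURNAROUND_TARGET_HOURS = 2.0
REJECTION_RATE_RANGE = (0.15, 0.20)
FLAKINESS_TARGET = 0.001  # 0.1%


@dataclass
class Review:
    submitted: datetime
    completed: datetime
    rejected: bool


def review_metrics(
    reviews: list[Review], post_merge_runs: int, post_merge_flaky_failures: int
) -> dict[str, float]:
    if not reviews or post_merge_runs <= 0:
        raise ValueError("need at least one review and one post-merge run")
    hours = [(r.completed - r.submitted) / timedelta(hours=1) for r in reviews]
    return {
        "median_turnaround_hours": median(hours),
        "rejection_rate": sum(r.rejected for r in reviews) / len(reviews),
        "post_merge_flakiness": post_merge_flaky_failures / post_merge_runs,
    }


def meets_targets(m: dict[str, float]) -> dict[str, bool]:
    """Compare computed metrics against the goals in the table above."""
    low, high = REJECTION_RATE_RANGE
    return {
        "turnaround": m["median_turnaround_hours"] < TURNAROUND_TARGET_HOURS,
        "rejection_rate": low <= m["rejection_rate"] <= high,
        "post_merge_flakiness": m["post_merge_flakiness"] < FLAKINESS_TARGET,
    }
```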

6. Real-World Success: "The FinTech Security Audit"

A major global payment processor implemented a "Triple-Audit" review process for its core settlement engine.

- The Process: Every test required a review from an SDET, a Developer, and a Security Engineer.

- The Discovery: During a routine review, the Security Engineer identified that a test was "Mocking" the authentication layer too broadly, potentially masking a real token-validation bug.

- The Result: The test was rewritten to include a "Real-Handshake" validation. One month later, this exact test caught a P0 authentication regression that would have exposed 10,000 accounts.
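The over-broad mock in this story can be illustrated with a small, entirely hypothetical Python example built on the standard `hmac` and `unittest.mock` modules. `sign`, `verify_token`, `settle`, and the test key are illustrative assumptions, not the processor's actual code. When verification is replaced wholesale, a forged signature still produces a green test. The "real handshake" version exercises actual signing and verification with a test-only key.

```python
import hashlib
import hmac
from collections.abc import Callable
from unittest import mock

TEST_SECRET = b"test-only-secret"  # hypothetical test key, never a production secret


def sign(payload: str, secret: bytes = TEST_SECRET) -> str:
    return hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()


def verify_token(payload: str, signature: str, secret: bytes = TEST_SECRET) -> bool:
    """Hypothetical token check; constant-time comparison of the expected signature."""
    return hmac.compare_digest(sign(payload, secret), signature)


def settle(payload: str, signature: str,
           verifier: Callable[[str, str], bool] = verify_token) -> str:
    """Hypothetical settlement entry point: refuses to settle unverified requests."""
    return "SETTLED" if verifier(payload, signature) else "REJECTED"


def test_settle_broad_mock() -> None:
    # Over-broad mock: verification never runs, so even a forged signature is green.
    always_valid = mock.Mock(return_value=True)
    assert settle("txn=1", "forged-signature", verifier=always_valid) == "SETTLED"


def test_settle_real_handshake() -> None:
    # Narrow approach: real signing and verification with a test key.
    assert settle("txn=1", sign("txn=1")) == "SETTLED"
    assert settle("txn=1", "forged-signature") == "REJECTED"
```

Only the second test would fail if `verify_token` regressed, for example if it started accepting any signature.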

7. Collaborative Review Culture

Software quality is a social endeavor.

- Constructive Feedback: Move away from "This is wrong" to "Could we improve the resilience here by using a dynamic locator?"

- The Quality Advocate: Every team has a rotating "Review Lead" responsible for ensuring that the testing standards are upheld throughout the sprint.

2026 Test Case Review Checklist

- Business Alignment: Does the test actually validate the core requirement?

- Assertion Depth: Are we asserting the outcome, or just the UI?

- Data Isolation: Does the test use unique, isolated data for every run?

- Error Handling: Does the test provide a clear, readable error message when it fails?

- Parallel Readiness: Is the test thread-safe and timing-independent?

- Wait Logic: Does the test avoid hard sleeps and rely only on dynamic polling?

- Complexity Check: Is the test script clean, readable, and free of "Magic Numbers"?

- AI Clearance: Has the AI reviewer flagged any logic gaps or redundancies?
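Three checklist items (Data Isolation, Error Handling, and Parallel Readiness) can be seen together in one short Python sketch. `SHARED_STORE` is a hypothetical stand-in for a database shared by parallel test workers, and `register` and `make_unique_user` are illustrative helpers. A hardcoded email would collide with a parallel run and raise on the duplicate. Fresh UUID-based data, a descriptive assertion message, and cleanup in `finally` avoid that.

```python
import uuid
from dataclasses import dataclass


@dataclass
class User:
    user_id: str
    email: str


SHARED_STORE: dict[str, User] = {}  # stands in for a database shared by parallel tests


def register(store: dict[str, User], user: User) -> None:
    """Hypothetical system under test: rejects duplicate emails."""
    if user.email in store:
        raise ValueError(f"duplicate email: {user.email}")
    store[user.email] = user


def make_unique_user() -> User:
    """Data isolation: every call yields identifiers no other run can collide with."""
    suffix = uuid.uuid4().hex
    return User(user_id=f"user-{suffix}", email=f"qa+{suffix}@example.test")


def test_register_and_lookup() -> None:
    user = make_unique_user()
    register(SHARED_STORE, user)
    try:
        found = SHARED_STORE.get(user.email)
        # Readable failure message: what was expected, for whom, and what was found.
        assert found == user, f"expected {user.user_id} under {user.email}, found {found}"
    finally:
        SHARED_STORE.pop(user.email, None)  # clean up so reruns start from a known state
```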

Summary

- Tests are Code: Treat them with the same rigor, versioning, and peer-review as your product code.

- Assertions are the Heart: Audit your assertions to ensure they would actually fail if the feature broke.

- Stress the Flakiness: Run new tests in a 20x loop before approving them.

- Leverage AI: Use AI for shift-left reviews of logic gaps and redundancy.

- Collaborate across Roles: Involve developers and security leads in the review process for high-risk features.

- Measure Success: Track turnaround times and post-merge stability.

Conclusion

The test case review process is the ultimate filter between a high-performing engineering team and a chaotic one. In 2026, the volume of automated testing is so high that human oversight must be targeted and strategic. By moving beyond simple "Rubber-stamping" and embracing a data-driven, multi-role review workflow, organizations can build a test suite that is not just a safety net, but a foundational engine of developer confidence. In the age of continuous everything, the review process is the pilot that ensures the machine stays on course.

FAQs

1. Who should review test cases? Ideally, a peer QA engineer (SDET) and the developer who wrote the feature. For security-critical features, a security lead should also be involved.

2. How long should a test case review take? In a high-velocity environment, the "Turnaround Time" should be under 2 hours to prevent blocking the deployment pipeline.

3. What is "Test Case Redundancy"? When two or more tests validate the same logic in different ways, leading to increased CI/CD costs and slower feedback without adding quality value.

4. Can you use AI to review tests? Yes. In 2026, AI tools can identify logic gaps, redundancy, and performance issues in test scripts before a human even looks at them.

5. What is a "False Green"? A test that passes even though the underlying feature has a bug, usually due to flawed assertion logic or incorrect "Mock" data.

6. How do you handle "Flaky" tests during a review? By running the new test 20-50 times in a repeat loop with retries disabled. If it fails even once for a non-environmental reason, it is rejected.

7. Why is "Wait Logic" a focus during reviews? Because "Hard Sleeps" make tests slow and brittle. Modern reviews enforce "Dynamic Polling" (waiting for an element to appear) instead.

8. What is "Data Isolation" in a test review? Checking that the test uses its own unique data objects (e.g., a unique user ID) so it doesn't interfere with other tests running in parallel.

9. Is "Manual Testing" documentation reviewed too? Yes. Even manual exploratory charters should be reviewed to ensure the tester is focusing on the highest-risk areas of the application.

10. What is an "Assertion Audit"? A deep dive into exactly what a test is verifying to ensure it’s checking the final system state, not just a superficial UI change.

11. How does "Shift-Left" apply to reviews? By reviewing the "Test Plan" or "Test Scenario" before the code is even written, ensuring alignment at the requirement stage.

12. What if a developer disagrees with a test case's logic? This is a success! Disagreement during a review exposes a misunderstanding of the requirements before the code is finalized.

13. Do you review "Smoke Tests" differently? Yes. Smoke tests are reviewed for speed and reliability, as they are the first gate in the CI/CD pipeline and must never be flaky.

14. What are "Magic Numbers" in test scripts? Hardcoded values (like sleep(5000) or wait_for(30)) that should be replaced with named constants or configuration variables.

15. Can a test review process be "Automated"? Partially. AI and Linters can catch 60% of technical issues, but human review is still required to validate the intent and context of the test.