Enterprise Test Data Management Strategies

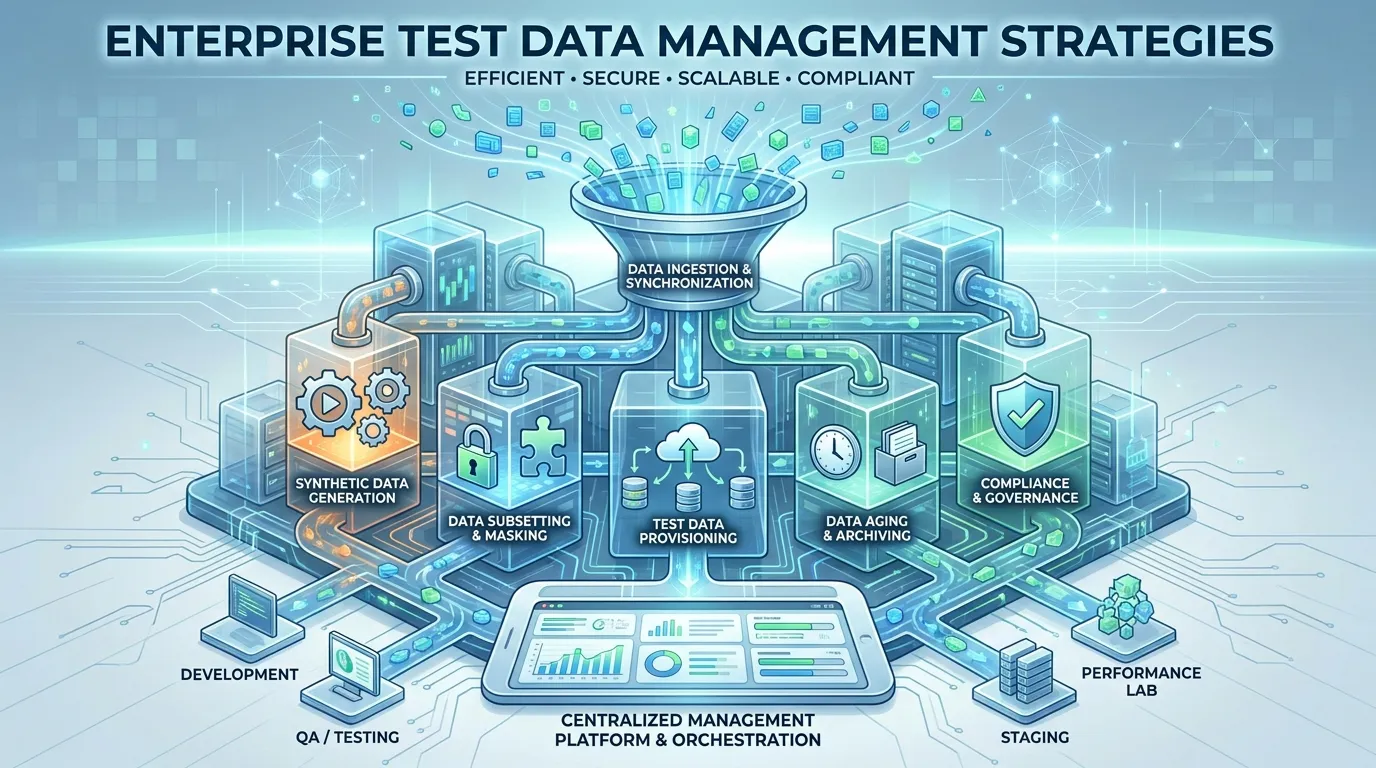

In the modern enterprise, data is the lifeblood of every application. But for Software Quality Assurance (QA) and development teams, data is often the biggest bottleneck. We have all been there: a critical test fails not because of a bug in the code, but because the test data was stale, missing, or corrupted. In a world of microservices and global cloud deployments, managing this data at scale is one of the most complex challenges a technical organization faces.

Enterprise Test Data Management (TDM) is no longer just about taking a database dump and loading it into a QA environment. It is about speed, privacy, and precision. With regulations such as GDPR, CCPA, and HIPAA actively enforced, the old habit of using "real" production data for testing is not just a bad practice; it can be a legal liability. In this guide, we will dive deep into advanced strategies for TDM in 2026, exploring how to leverage synthetic data, automated masking, and self-service orchestration to turn your data from a bottleneck into a competitive advantage.

The Evolution of TDM in Cloud-Native Environments

For decades, TDM was a manual, slow-moving process. A developer would request data, a DBA would prepare a subset, and days (or weeks) later, the data would arrive. In a modern CI/CD environment where organizations deploy dozens of times per day, this old model is completely broken.

Shifting TDM "Left" into the Pipeline

Modern TDM must be "Shifted Left", meaning integrated directly into the development cycle. Test data should be provisioned automatically as part of the CI/CD pipeline. When a developer creates a new Pull Request, the pipeline should automatically spin up an ephemeral environment and populate it with the exact, isolated data needed for that specific feature. This "Data-as-Code" approach ensures that tests always run against a clean, predictable state, which removes a common cause of "Flaky Tests": shared, dirty environments.
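As a minimal sketch of the "Data-as-Code" idea, the snippet below keeps seed rows in a versioned structure and loads them into a fresh in-memory SQLite database for each run. The table names, the `SEED` contents, and the `provision_ephemeral_db` helper are illustrative assumptions, not part of any particular TDM product.

```python
import sqlite3

# Hypothetical seed definition, versioned in the repo next to the feature code.
SEED = {
    "users": [(1, "test-user-1@example.test"), (2, "test-user-2@example.test")],
    "orders": [(100, 1, "PENDING"), (101, 2, "SHIPPED")],
}

def provision_ephemeral_db() -> sqlite3.Connection:
    """Create a fresh, isolated in-memory database and load the seed rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
        CREATE TABLE orders (
            id INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL REFERENCES users(id),
            status TEXT NOT NULL
        );
        """
    )
    conn.executemany("INSERT INTO users VALUES (?, ?)", SEED["users"])
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", SEED["orders"])
    conn.commit()
    return conn

# Each run (or pull request) gets its own database, so state never leaks.
db_a = provision_ephemeral_db()
db_b = provision_ephemeral_db()
db_a.execute("DELETE FROM orders")
db_a.commit()
remaining_in_a = db_a.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
remaining_in_b = db_b.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

Deleting rows in one environment leaves the other untouched, which is the isolation property a per-PR environment is meant to provide.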

The Complexity of Distributed Microservices

In a monolithic architecture, TDM was relatively simple. In a microservices architecture, a single user transaction might touch ten different databases. Ensuring "Referential Integrity" across these distributed systems is a massive challenge. If you refresh the data in Service A but not Service B, your end-to-end tests will fail. Advanced enterprise strategies involve Test Data Orchestration, where a centralized service coordinates data refreshes across all related services simultaneously, ensuring a consistent world-view for every test execution.
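One way to picture Test Data Orchestration is an all-or-nothing refresh: either every service moves to the same data version, or none of them do. The sketch below uses a hypothetical `ServiceStore` class as a stand-in for each service's test database; a real orchestrator would call each service's own refresh mechanism.

```python
from dataclasses import dataclass

@dataclass
class ServiceStore:
    """Stand-in for one microservice's test database (hypothetical)."""
    name: str
    data_version: str = "v0"
    fail_on_refresh: bool = False

    def refresh(self, version: str) -> None:
        if self.fail_on_refresh:
            raise RuntimeError(f"{self.name}: refresh failed")
        self.data_version = version

def refresh_all(stores: list, version: str) -> bool:
    """Refresh every store to the same version, or roll all of them back."""
    previous = {s.name: s.data_version for s in stores}
    try:
        for store in stores:
            store.refresh(version)
    except RuntimeError:
        # Restore the old version everywhere so tests never see a mixed state.
        for store in stores:
            store.data_version = previous[store.name]
        return False
    return True

stores = [ServiceStore("orders"), ServiceStore("billing"), ServiceStore("shipping")]
ok = refresh_all(stores, "2026-01-15")
versions_after_success = {s.data_version for s in stores}

# Simulate one service failing mid-refresh.
stores[2].fail_on_refresh = True
failed_ok = refresh_all(stores, "2026-01-16")
versions_after_failure = {s.data_version for s in stores}
```

After the simulated failure, all three stores still report the earlier version, so end-to-end tests would not run against a half-refreshed world.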

Data Masking vs. Synthetic Generation: A Comparative Guide

To move fast without risking a data breach, enterprises use two primary techniques: Data Masking and Synthetic Data Generation. Understanding when to use which is the key to a successful enterprise test data management strategy.

Persistent Data Masking (Obfuscation)

Data masking involves taking real production data and scrambling the sensitive parts (names, emails, credit card numbers) while keeping the overall structure and relationships intact.

- Best for: Regression testing where "high fidelity" is crucial. If your app has complex business logic that depends on real-world data patterns, masking is the best way to maintain that realism without exposing PII (Personally Identifiable Information).

- Challenge: It still requires a production database as a starting point, which can be massive and slow to move. Over-masking can also "break" the data, making it useless for testing certain edge cases.
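To illustrate how masking can keep relationships intact, here is a small sketch of deterministic pseudonymization: the same real value always maps to the same masked token, so joins between tables still line up. The `MASKING_KEY`, the `pseudonym` helper, and the `example.test` domain are assumptions for illustration; a real key would come from a secret store.

```python
import hashlib
import hmac

# Assumption: in practice the key is loaded from a secret store, never hard-coded.
MASKING_KEY = b"demo-only-key"

def pseudonym(value: str, prefix: str) -> str:
    """Map a real value to a stable, keyed token (same input -> same output)."""
    digest = hmac.new(MASKING_KEY, value.lower().encode("utf-8"), hashlib.sha256)
    return f"{prefix}_{digest.hexdigest()[:12]}"

def mask_email(email: str) -> str:
    return pseudonym(email, "user") + "@example.test"

# Two tables that reference the same customer by email.
customers = [{"id": 1, "email": "Ada@Corp.com"}]
tickets = [{"ticket": 77, "customer_email": "ada@corp.com"}]

masked_customers = [{**c, "email": mask_email(c["email"])} for c in customers]
masked_tickets = [
    {**t, "customer_email": mask_email(t["customer_email"])} for t in tickets
]
```

Because the mapping is keyed and deterministic, the masked customer and the masked ticket still share the same email value, while the original address no longer appears anywhere.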

Synthetic Data Generation (The Privacy Champion)

Synthetic data is computer-generated data that mimics the statistical properties of real data but contains zero real information.

- Best for: Early-stage development, security testing, and "Zero-Trust" environments. Because no actual user data is involved, synthetic data is far easier to share across borders (e.g., from an EU production site to an offshore dev team), provided the generator cannot leak or reconstruct real records.

- Benefit: AI-driven synthetic data tools can generate data that closely mirrors production "hotspots" (areas with high error rates or complex logic), allowing you to test scenarios that haven't even happened in production yet.
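The simplest form of synthetic generation does not need AI at all. The sketch below builds fake users from a seeded random generator, so every pipeline run produces the same data. The field names, the name list, and the plan weights are invented for illustration and are not derived from any real dataset.

```python
import random
from datetime import date, timedelta

FIRST_NAMES = ["Alex", "Sam", "Jordan", "Riley", "Casey"]
PLANS = ["free", "pro", "enterprise"]

def synthetic_users(count: int, seed: int) -> list:
    """Generate fake users from a seeded RNG so every run is reproducible."""
    rng = random.Random(seed)
    users = []
    for i in range(1, count + 1):
        signup = date(2025, 1, 1) + timedelta(days=rng.randint(0, 364))
        users.append({
            "id": i,
            "name": rng.choice(FIRST_NAMES),
            "email": f"user{i}@example.test",
            "age": rng.randint(18, 90),
            # Skew toward the free plan to mimic an assumed distribution.
            "plan": rng.choices(PLANS, weights=[70, 25, 5])[0],
            "signup": signup.isoformat(),
        })
    return users

batch = synthetic_users(1000, seed=42)
```

Fixing the seed is a deliberate choice: a test that fails on synthetic data can be rerun against exactly the same rows.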

Solving the GDPR/HIPAA Compliance Challenge in QA

In 2026, privacy is non-negotiable. An enterprise TDM strategy must be "Secure by Design."

Automated Data Discovery and Classification

You cannot protect data if you don't know where it is. Advanced TDM platforms use AI to scan your entire database ecosystem, automatically identifying PII and sensitive health information. Once identified, these fields are automatically flagged for masking or replacement. This reduces the "Human Error" risk where a developer accidentally includes a real email address in a QA environment.
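Commercial discovery tools are far more sophisticated, but the core idea can be sketched with a few regular expressions: sample the values in each column and flag the column when most of them look like a known PII type. The patterns, the 80% threshold, and the `classify_columns` helper are simplifying assumptions.

```python
import re

# Illustrative patterns only; real classifiers need far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
    "phone": re.compile(r"^\+?\d[\d\s().-]{7,}\d$"),
}

def classify_columns(rows: list, threshold: float = 0.8) -> dict:
    """Flag a column when most of its non-empty values match a PII pattern."""
    flagged = {}
    columns = list(rows[0].keys()) if rows else []
    for column in columns:
        values = [str(r.get(column)) for r in rows if r.get(column) not in (None, "")]
        if not values:
            continue
        for label, pattern in PII_PATTERNS.items():
            hits = sum(1 for v in values if pattern.match(v))
            if hits / len(values) >= threshold:
                flagged[column] = label
                break  # first matching label wins
    return flagged

sample = [
    {"id": 1, "contact": "ada@example.com", "tax_ref": "123-45-6789", "note": "vip"},
    {"id": 2, "contact": "bob@example.org", "tax_ref": "987-65-4321", "note": "call back"},
]
findings = classify_columns(sample)
```

Note that the scan looks at values rather than column names, so a field called `contact` or `tax_ref` is still caught even though its name gives nothing away.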

Row-Level Security and Data Residency

For global enterprises, data residency rules in jurisdictions such as Germany or China can prohibit certain categories of data from leaving the country or region. TDM governance must ensure that data subsets stay within their permitted regions. By using Service Virtualization, you can test applications in one region while "mocking" the data responses from a database in another, maintaining compliance while allowing global development teams to collaborate.
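A residency rule can be enforced in code as a simple gate in front of every provisioning request. The sketch below is one possible shape for such a gate; the dataset names, region names, and the `ALLOWED_REGIONS` policy table are hypothetical, and the actual policy would have to come from your legal and compliance teams.

```python
# Hypothetical policy table: regions where each dataset may be provisioned.
ALLOWED_REGIONS = {
    "customers_masked_de": {"eu-central"},
    "orders_synthetic": {"eu-central", "us-east", "ap-south"},
}

class ResidencyViolation(Exception):
    """Raised when a dataset would leave its permitted regions."""

def authorize_copy(dataset: str, target_region: str) -> None:
    # Unknown datasets are denied by default rather than allowed.
    allowed = ALLOWED_REGIONS.get(dataset, set())
    if target_region not in allowed:
        raise ResidencyViolation(
            f"{dataset} may not be provisioned in {target_region}"
        )

def can_copy(dataset: str, target_region: str) -> bool:
    """Convenience wrapper that reports the decision instead of raising."""
    try:
        authorize_copy(dataset, target_region)
    except ResidencyViolation:
        return False
    return True

decisions = {
    "de_to_eu": can_copy("customers_masked_de", "eu-central"),
    "de_to_us": can_copy("customers_masked_de", "us-east"),
    "unknown": can_copy("legacy_dump", "eu-central"),
}
```

Denying unknown datasets by default means a newly added data source cannot be copied anywhere until someone has classified it.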

Self-Service Data Provisioning for Dev Teams

The ultimate goal of enterprise TDM is to get the "DBA out of the way."

TDM as a Service (TDMaaS)

Scalable organizations provide developers with a self-service portal (or API) where they can request the specific data they need. Need a "User with 3 expired credit cards and a pending refund"? Instead of spending two hours manually creating that state, the developer sends a request to the TDM portal, which instantly generates or clones that specific scenario. This "On-Demand" capability is what allows top-tier engineering teams to maintain high velocity without sacrificing quality.
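Behind a self-service portal there is usually something like a scenario builder: a function that turns a short request into the exact state a test needs. The sketch below shows the "3 expired credit cards and a pending refund" example; the `Scenario` and `Card` types and the `build_scenario` parameters are hypothetical, not the API of any specific TDM tool.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

@dataclass
class Card:
    last4: str
    expires: date

@dataclass
class Scenario:
    user_id: int
    cards: list = field(default_factory=list)
    refund_status: Optional[str] = None

def build_scenario(
    user_id: int,
    expired_cards: int = 0,
    pending_refund: bool = False,
    today: date = date(2026, 1, 1),
) -> Scenario:
    """Build the requested state directly instead of clicking through the UI."""
    cards = [
        # Every card expires in the year before `today`, so it is already expired.
        Card(last4=str(1000 + i), expires=date(today.year - 1, 1 + i % 12, 1))
        for i in range(expired_cards)
    ]
    return Scenario(user_id, cards, "PENDING" if pending_refund else None)

# What a developer's request to the portal might boil down to.
request = {"expired_cards": 3, "pending_refund": True}
scenario = build_scenario(user_id=501, **request)
```

Passing `today` explicitly keeps the scenario deterministic, so a card that is "expired" in the test does not depend on the day the test runs.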

Snapshotting and Versioning Test Data

Treating data like code means it should have versions. Tools like Delphix or specialized container data volumes allow you to take "Snapshots" of your test data. If an E2E test fails, you can "Bookmark" the exact state of the database at that moment. This allows developers to "Travel back in time" to see exactly why the failure occurred, rather than trying to replicate a transient data state that has already changed.
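Dedicated tools handle snapshots at the storage layer, but the bookmark-and-restore idea can be demonstrated at small scale with SQLite's backup API, which Python exposes as `Connection.backup`. The `snapshot` and `restore` helpers and the `accounts` table below are illustrative assumptions.

```python
import sqlite3

def snapshot(source: sqlite3.Connection) -> sqlite3.Connection:
    """Copy the full database state into a separate in-memory bookmark."""
    bookmark = sqlite3.connect(":memory:")
    source.backup(bookmark)
    return bookmark

def restore(bookmark: sqlite3.Connection, target: sqlite3.Connection) -> None:
    """Overwrite the target database with the bookmarked state."""
    bookmark.backup(target)

live = sqlite3.connect(":memory:")
live.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER)")
live.execute("INSERT INTO accounts VALUES (1, 100)")
live.commit()

bookmark = snapshot(live)  # state at the moment a test failed

# Later activity changes the data that caused the failure.
live.execute("UPDATE accounts SET balance = 0 WHERE id = 1")
live.commit()
balance_before_restore = live.execute(
    "SELECT balance FROM accounts WHERE id = 1"
).fetchone()[0]

restore(bookmark, live)  # "travel back" to the bookmarked state
balance_after_restore = live.execute(
    "SELECT balance FROM accounts WHERE id = 1"
).fetchone()[0]
```

The same pattern scales up conceptually: bookmark on failure, let the environment keep moving, and restore the bookmark when someone is ready to debug.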

Step-by-Step: Designing a Compliant TDM Framework

Step 1: The Data Audit

Map out every data source, from legacy mainframes to modern NoSQL clusters. Identify which systems share data and where the "source of truth" lives for critical entities like Customers or Orders.

Step 2: Establish Privacy Standards

Define your masking rules globally. Names should always be replaced with realistic-looking fake names; emails should always point to an internal "sinkhole" domain. Ensure these rules are strictly enforced across all environments.
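Global rules are easiest to enforce when they live in one place. A minimal sketch, assuming a single rule table keyed by column name: the `MASKING_RULES` table, the fake-name list, and the `sinkhole.qa.internal` domain are all hypothetical.

```python
import random

FAKE_NAMES = ["Alex Morgan", "Sam Rivera", "Jordan Lee", "Riley Chen"]
SINKHOLE_DOMAIN = "sinkhole.qa.internal"  # hypothetical internal domain

def mask_name(value: str, row_id: int) -> str:
    # Seed by row id so the same row always gets the same fake name.
    return random.Random(row_id).choice(FAKE_NAMES)

def mask_email(value: str, row_id: int) -> str:
    # Mail sent to this address never reaches a real inbox.
    return f"user{row_id}@{SINKHOLE_DOMAIN}"

# One global rule table, keyed by column name, shared by every environment.
MASKING_RULES = {"name": mask_name, "email": mask_email}

def apply_rules(row: dict) -> dict:
    """Mask every column that has a rule; pass other columns through unchanged."""
    return {
        column: MASKING_RULES[column](value, row["id"])
        if column in MASKING_RULES
        else value
        for column, value in row.items()
    }

original = {"id": 7, "name": "Grace Example", "email": "grace@example.com", "plan": "pro"}
masked = apply_rules(original)
```

Adding a new sensitive column then means adding one entry to the table, rather than updating masking scripts in every environment.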

Step 3: Implement Automation and Self-Service

Choose a TDM platform that integrates with your CI/CD toolchain. Start by automating the data refresh for your most critical regression environment, then move toward providing ephemeral, on-demand data for every developer.

Step 4: Monitor and Clean

Data "rots" over time. Implement automated "Data Health Checks" that verify referential integrity and compliance daily. Regularly "Prune" your test databases to keep them small, fast, and cost-effective.
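A daily health check can start as two small queries: one that finds orphaned rows and one that finds values that escaped masking. The sketch below runs both against an in-memory SQLite database; the schema, the sample rows, and the `example.test` masking domain are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER);
    INSERT INTO customers VALUES (1, 'user1@example.test');
    INSERT INTO customers VALUES (2, 'real.person@gmail.com');
    INSERT INTO orders VALUES (10, 1);
    INSERT INTO orders VALUES (11, 99);
    """
)

def orphaned_orders(conn: sqlite3.Connection) -> list:
    """Orders whose customer no longer exists (broken referential integrity)."""
    rows = conn.execute(
        """
        SELECT o.id FROM orders o
        LEFT JOIN customers c ON c.id = o.customer_id
        WHERE c.id IS NULL
        ORDER BY o.id
        """
    ).fetchall()
    return [r[0] for r in rows]

def unmasked_emails(conn: sqlite3.Connection, allowed_domain: str = "example.test") -> list:
    """Customers whose email does not use the approved masking domain."""
    rows = conn.execute(
        "SELECT id FROM customers WHERE email NOT LIKE ? ORDER BY id",
        (f"%@{allowed_domain}",),
    ).fetchall()
    return [r[0] for r in rows]

report = {
    "orphaned_orders": orphaned_orders(conn),
    "unmasked_emails": unmasked_emails(conn),
}
```

A scheduled job could fail the build or raise an alert whenever either list in the report is non-empty.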

Summary

In summary, a modern enterprise test data management strategy is a blend of technology and policy.

- Automate Everything: Integrate data provisioning into your CI/CD pipelines to eliminate manual bottlenecks.

- Privacy First: Use AI-driven masking and synthetic data to keep real personal data out of test environments and support compliance with global regulations.

- Referential Integrity: Use orchestration to keep data consistent across distributed microservices.

- Empower Developers: Provide self-service tools so teams can get the data they need when they need it.

- Treat Data as Code: Use versioning and snapshotting to make data-related bugs easy to debug.

Conclusion

Test data is often the "final frontier" of automation. Organizations that master TDM will see a significant increase in their deployment velocity and a dramatic decrease in "false positive" test failures. In the data-driven world of 2026, managing your test data with precision and security is not just a technical task—it is a business imperative. By shifting TDM left and embracing intelligent data generation, you can ensure that your QA process is as fast and agile as the software it supports.

FAQs

1. Is synthetic data as good as "real" data for testing? For most scenarios, yes. Modern AI-driven synthetic data can closely match the statistical properties of real data. However, for deep debugging of subtle, data-driven production bugs, masked production data (high-fidelity) is still superior.

2. How do we handle test data for third-party API integrations? Use Service Virtualization. Instead of trying to manage data in an external system (which you don’t control), mock the API responses with the exact data scenarios you need. This makes your tests faster and more reliable.

3. What is the biggest cost associated with TDM? Storage and infrastructure. Keeping multiple copies of massive enterprise databases is expensive. This is why "Data Subsetting"—creating small, representative samples of your data—is a critical TDM skill.

4. Can TDM be handled by the developers themselves? While developers should use the TDM tools, the standards and infrastructure are usually best managed by a centralized Quality Engineering or DataOps team to ensure security and compliance standards are met across the whole company.

5. How do we handle "Referential Integrity" in NoSQL databases? It's harder than in SQL, but just as important. You must use orchestration scripts that understand the "Links" between your NoSQL documents and update them in sync. Many modern TDM tools now have built-in support for popular NoSQL stores like MongoDB and Cassandra.

6. Does TDM impact the speed of the CI/CD pipeline? If done poorly, yes. If done well (using ephemeral snapshots and subsets), it should actually speed up the pipeline by eliminating the time developers spend manually setting up data.

7. How do we ensure that synthetic data covers "Edge Cases"? You must manually define your business rules and constraints within your synthetic data generator. For example, "A user cannot have a negative age" or "A shipping address must match a valid ZIP code." The best generators allow you to inject these rules into the AI models.
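To make the last answer concrete, here is a minimal sketch of rule-driven generation: boundary records are always included, and random candidates that break a business rule are rejected. The rules, the edge-case list, and the `generate` helper are assumptions for illustration, and the ZIP rule only checks the five-digit format rather than validating against a real postal database.

```python
import random

def rule_age_non_negative(user: dict) -> bool:
    return user["age"] >= 0

def rule_zip_format(user: dict) -> bool:
    # Simplification: checks the 5-digit shape only, not whether the ZIP exists.
    return len(user["zip"]) == 5 and user["zip"].isdigit()

RULES = [rule_age_non_negative, rule_zip_format]

# Boundary values that random sampling rarely produces on its own.
EDGE_CASES = [
    {"age": 0, "zip": "00501"},
    {"age": 120, "zip": "99950"},
]

def generate(count: int, seed: int) -> list:
    """Return `count` records: the edge cases first, then rule-abiding random rows."""
    rng = random.Random(seed)
    records = [dict(case) for case in EDGE_CASES]  # always include the boundaries
    while len(records) < count:
        candidate = {
            "age": rng.randint(-5, 120),  # deliberately allows invalid ages
            "zip": f"{rng.randint(0, 99999):05d}",
        }
        if all(rule(candidate) for rule in RULES):  # reject rule-breaking rows
            records.append(candidate)
    return records

records = generate(50, seed=7)
```

Keeping the rules as plain functions means the same list can validate generated data and document the business constraints in one place.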