Validating Data Warehouses: Snowflake, BigQuery, and Databricks (2026)

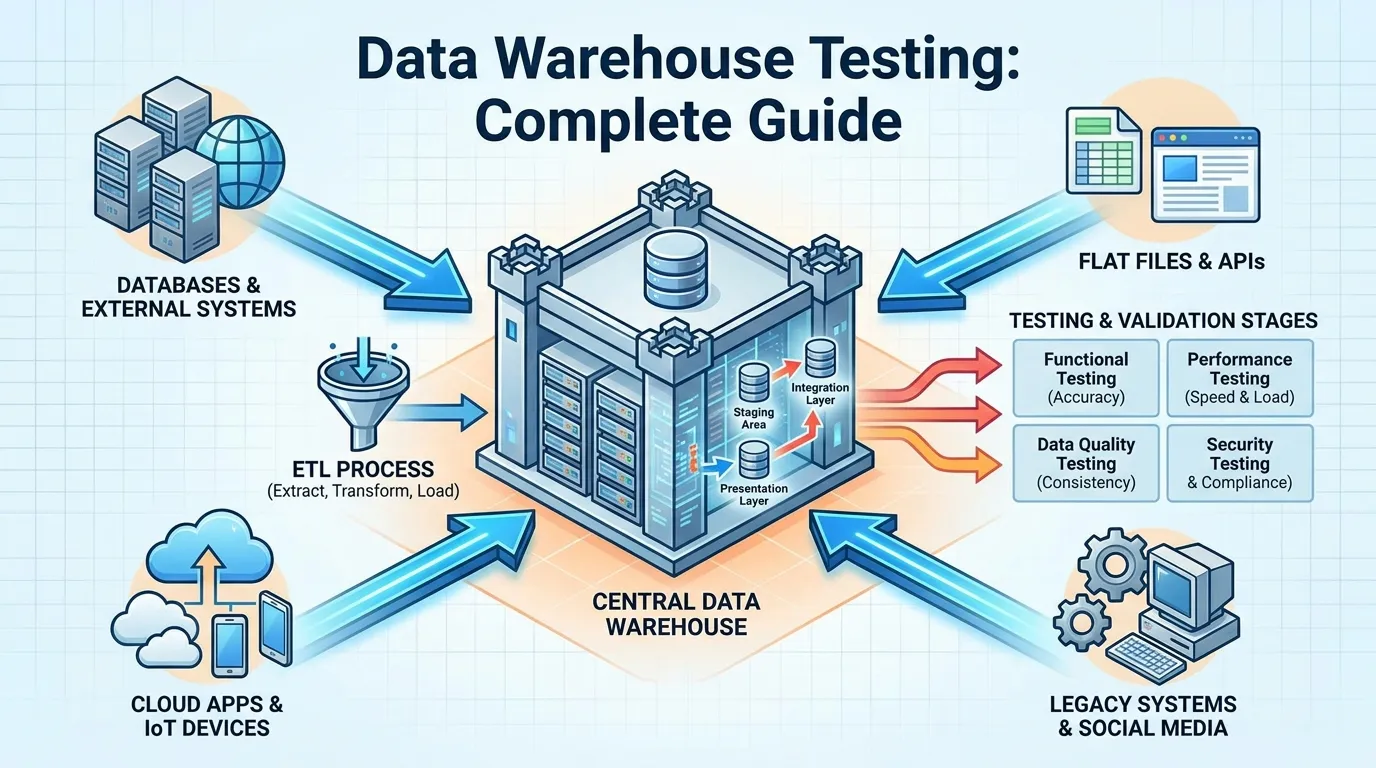

The data warehouse has evolved from a simple repository for historical reports into the "Brain" of the modern enterprise. In 2026, it is the central node where structured business data meets unstructured AI training sets, and where complex transformations feed real-time analytics. As enterprises consolidate their data onto platforms like Snowflake, BigQuery, and Databricks, the stakes for technical validation have reached an all-time high.

A single schema drift or a misconfigured cost-optimization policy in the warehouse can not only lead to incorrect insights but also drain an annual budget in a matter of days. Quality Assurance (QA) in the warehouse is no longer just about "Matching the Numbers"; it is about validating the architecture, the performance, and the "FinOps" (Financial Operations) of the data at rest. This guide provides a technical framework for data warehouse validation across the three industry giants of 2026.

Comparing the "Big Three": A Technical Snapshot for QA

Before testing, it is critical to understand the architectural differences that impact validation:

| Feature | Snowflake | BigQuery | Databricks |
|---|---|---|---|
| Architecture | Multi-cluster, shared data. | Serverless, distributed. | Data Lakehouse (Delta Lake). |
| Pricing | Credit-based (Compute). | Data scanned (on-demand) or reserved slot capacity. | DBU (Databricks Units) + Cloud Infra. |
| QA Focus | Scaling efficiency & Data cloning. | Scan volume & Partitioning. | Cluster configuration & Photon engine. |
| Data Quality | Data Metric Functions (Native). | SQL Procedural validation. | Delta Live Tables (DLT). |

Validating Data Quality at Rest

Once data reaches the warehouse, it must be validated against its "Intended State."

1. Schema Enforcement and Non-Nullable Checks

- The Risk: A transformation pipeline accidentally inserts a "Null" value into a primary key column, breaking downstream joins and reports.

- The Strategy: Using dbt to enforce strict schema rules (for example, `not_null` tests on key columns or an enforced model contract).

- The Test: "Null-Check Regression." Periodically running a dbt test sweep over all "Gold" tables to ensure that non-nullable columns have remained clean.
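A minimal sketch of the Null-Check Regression, written in plain Python so it stays tool-agnostic. It assumes a hypothetical registry (`GOLD_NOT_NULL`) listing each Gold table's non-nullable columns; in a dbt project that list would normally live in the model YAML as `not_null` tests. An in-memory SQLite database stands in for the warehouse.

```python
import sqlite3

# Hypothetical registry of "Gold" tables and their non-nullable columns.
# In practice this would come from dbt model YAML or the information schema.
GOLD_NOT_NULL = {
    "dim_customer": ["customer_id", "region_id"],
    "fct_sales": ["sales_id", "customer_id"],
}


def null_check_regression(conn, registry):
    """Return (table, column, null_count) for every non-nullable column with NULLs."""
    failures = []
    for table, columns in registry.items():
        for column in columns:
            # Identifiers come from a trusted registry, not from user input.
            sql = f'SELECT COUNT(*) FROM "{table}" WHERE "{column}" IS NULL'
            (null_count,) = conn.execute(sql).fetchone()
            if null_count:
                failures.append((table, column, null_count))
    return failures


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE dim_customer (customer_id INTEGER, region_id INTEGER);
        CREATE TABLE fct_sales (sales_id INTEGER, customer_id INTEGER);
        INSERT INTO dim_customer VALUES (1, 10), (2, 20);
        INSERT INTO fct_sales VALUES (100, 1), (NULL, 2);  -- a NULL slipped in
        """
    )
    print(null_check_regression(conn, GOLD_NOT_NULL))  # -> [('fct_sales', 'sales_id', 1)]
```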

2. Referential Integrity at Scale

- The Test: "The Orphan Record Hunt." Verifying that every transaction in a fact table has a corresponding entry in its parent dimension table (e.g., every `Sales_ID` has a valid `Region_ID`).

- Validation: In 2026, we use Great Expectations to automate these referential integrity checks across trillions of rows without having to write manual SQL for every table pair.
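Whichever tool generates it, an orphan-record check reduces to an anti-join: fact rows whose lookup into the dimension finds nothing. The sketch below is plain SQL driven from Python rather than Great Expectations itself; the table and column names are hypothetical, and SQLite stands in for the warehouse.

```python
import sqlite3


def find_orphans(conn, fact, fk, dim, pk):
    """Return distinct foreign-key values in `fact` with no match in `dim`.

    LEFT JOIN anti-join: a fact row whose dimension side comes back NULL is an
    orphan. Rows whose foreign key is itself NULL are excluded; those belong to
    the null-check sweep instead.
    """
    sql = f"""
        SELECT DISTINCT f."{fk}"
        FROM "{fact}" AS f
        LEFT JOIN "{dim}" AS d ON f."{fk}" = d."{pk}"
        WHERE d."{pk}" IS NULL AND f."{fk}" IS NOT NULL
        ORDER BY f."{fk}"
    """
    return [row[0] for row in conn.execute(sql)]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE dim_region (region_id INTEGER);
        CREATE TABLE fct_sales (sales_id INTEGER, region_id INTEGER);
        INSERT INTO dim_region VALUES (10), (20);
        INSERT INTO fct_sales VALUES (1, 10), (2, 20), (3, 99);
        """
    )
    print(find_orphans(conn, "fct_sales", "region_id", "dim_region", "region_id"))  # -> [99]
```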

Performance Benchmarking and Cost Optimization: The FinOps Layer

In 2026, a "Fast" query is only a success if it is also a "Cheap" query. QA must validate the efficiency of the underlying SQL.

1. Query Profiling in Snowflake

- The Test: "The Spilled Query Audit." Using Snowflake’s Query Profile to identify queries that "Spill" to local or remote disk.

- The Validation: If a query spills to disk, it indicates that the virtual warehouse size is too small or the join logic is inefficient. QA should flag these as "Performance Defects."
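A sketch of the Spilled Query Audit run in bulk rather than one Query Profile at a time. It assumes query history is read from Snowflake's `SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY` view, which exposes `BYTES_SPILLED_TO_LOCAL_STORAGE` and `BYTES_SPILLED_TO_REMOTE_STORAGE`, and that rows arrive as dicts keyed by upper-case column names, as a dictionary cursor would typically return them. The severity ranking and the one-day window are illustrative choices.

```python
# Hypothetical audit query; column names follow Snowflake's
# SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY view. The one-day window is arbitrary.
SPILL_AUDIT_SQL = """
SELECT query_id, warehouse_name, warehouse_size,
       bytes_spilled_to_local_storage,
       bytes_spilled_to_remote_storage
FROM snowflake.account_usage.query_history
WHERE start_time >= DATEADD('day', -1, CURRENT_TIMESTAMP())
  AND (bytes_spilled_to_local_storage > 0
       OR bytes_spilled_to_remote_storage > 0)
"""


def classify_spills(rows):
    """Turn query-history rows into "Performance Defect" records.

    Rows are assumed to be dicts keyed by upper-case column names.
    Remote spill is ranked above local spill because writing to remote
    storage is slower than writing to the warehouse's local disk.
    """
    defects = []
    for row in rows:
        local = row.get("BYTES_SPILLED_TO_LOCAL_STORAGE") or 0
        remote = row.get("BYTES_SPILLED_TO_REMOTE_STORAGE") or 0
        if remote > 0:
            severity = "high"
        elif local > 0:
            severity = "medium"
        else:
            continue  # no spill, so not a defect
        defects.append({
            "query_id": row["QUERY_ID"],
            "severity": severity,
            "local_bytes": local,
            "remote_bytes": remote,
        })
    return defects
```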

2. Partitioning and Clustering in BigQuery

- The Test: "Scan Volume Verification." Under on-demand pricing, BigQuery costs are driven by the amount of data scanned.

- The Validation: QA must verify that every query against a multi-terabyte table includes a `WHERE` clause that targets a partitioned column (e.g., `event_date`). If a query triggers a "Full Table Scan," the test fails.
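Scan volume can be checked before a query ever runs. The sketch assumes the `google-cloud-bigquery` client library: a dry run (`QueryJobConfig(dry_run=True)`) reports `total_bytes_processed` without executing or billing the query, and the table's size is available from `client.get_table(table_id).num_bytes`. The 10% threshold in `assert_partition_pruned` is a hypothetical default, not a BigQuery rule.

```python
def dry_run_bytes(client, sql):
    """Estimate bytes scanned via a BigQuery dry run (the query is not executed).

    `client` is a google.cloud.bigquery.Client. The import is local so the
    threshold check below works without the library installed.
    """
    from google.cloud import bigquery

    job_config = bigquery.QueryJobConfig(dry_run=True, use_query_cache=False)
    job = client.query(sql, job_config=job_config)
    return job.total_bytes_processed


def assert_partition_pruned(bytes_processed, table_bytes, max_fraction=0.1):
    """Fail if the query would scan more than `max_fraction` of the table.

    A scan close to the full table size suggests the WHERE clause did not
    prune partitions (e.g., it does not filter on event_date).
    """
    fraction = bytes_processed / table_bytes if table_bytes else 0.0
    if fraction > max_fraction:
        raise AssertionError(
            f"Query scans {fraction:.0%} of the table; expected <= {max_fraction:.0%}"
        )
    return fraction


# Example usage (requires credentials; table name is hypothetical):
# client = bigquery.Client()
# used = dry_run_bytes(client, "SELECT * FROM `proj.ds.events` WHERE event_date = '2026-01-01'")
# assert_partition_pruned(used, client.get_table("proj.ds.events").num_bytes)
```

As a complementary guard, BigQuery can reject unfiltered queries outright when a partitioned table has the `require_partition_filter` option set.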

3. Cluster Optimization in Databricks

- The Test: "Driver and Worker Balance."

- The Validation: Monitoring the Databricks Spark UI to ensure that the workload is evenly distributed across worker nodes and that the "Driver" node is not becoming a bottleneck.
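The worker-balance half of this check can be reduced to a number: how much longer the slowest task in a stage runs than the median task. The sketch assumes per-task durations have already been exported from the Spark UI's stage page (or Spark's monitoring REST API); that extraction step and the 3x threshold are assumptions, and driver-side bottlenecks need separate monitoring.

```python
from statistics import median


def task_skew_ratio(task_durations_ms):
    """Ratio of the slowest task to the median task within one stage.

    A ratio near 1 means work is evenly spread across workers; a large ratio
    means a few tasks (often one skewed partition) dominate the stage.
    """
    if not task_durations_ms:
        raise ValueError("stage has no tasks")
    mid = median(task_durations_ms)
    return max(task_durations_ms) / mid if mid else float("inf")


def flag_skewed_stages(stages, threshold=3.0):
    """Return stage ids whose skew ratio exceeds a (hypothetical) threshold.

    `stages` maps a stage id to the list of its task durations in ms.
    """
    return [stage_id for stage_id, durations in stages.items()
            if task_skew_ratio(durations) > threshold]
```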

Detecting Schema Drift and Semantic Errors

Data changes over time (Drift), and the meaning of data can shift (Semantic Error).

1. Schema Drift Detection

- The Automated Test: Running daily "Schema Snapshots" and comparing them against the baseline. If a column type changes from `INT` to `STRING`, an alert is fired before the downstream AI model consuming that data crashes.
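The snapshot comparison can be as simple as diffing two `{column: type}` maps. The sketch assumes each snapshot was captured from the warehouse's `INFORMATION_SCHEMA.COLUMNS` view (`COLUMN_NAME`, `DATA_TYPE`), which Snowflake and BigQuery both expose and Databricks Unity Catalog mirrors; how snapshots are stored and how alerts are routed is left out.

```python
def diff_schemas(baseline, current):
    """Compare two {column: type} snapshots and describe the drift."""
    added = sorted(set(current) - set(baseline))
    removed = sorted(set(baseline) - set(current))
    # Type names are compared case-insensitively ("int" vs "INT" is not drift).
    retyped = sorted(
        (col, baseline[col], current[col])
        for col in set(baseline) & set(current)
        if baseline[col].upper() != current[col].upper()
    )
    return {"added": added, "removed": removed, "retyped": retyped}


def has_drift(drift):
    """True if any column was added, removed, or changed type."""
    return any(drift.values())


if __name__ == "__main__":
    baseline = {"order_id": "INT", "amount": "NUMERIC"}
    today = {"order_id": "STRING", "amount": "NUMERIC"}
    drift = diff_schemas(baseline, today)
    if has_drift(drift):
        print("ALERT:", drift)
```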

2. Semantic Validation

- The Scenario: The business changes the definition of "Active User" from "Someone who logged in" to "Someone who made a purchase."

- The QA Test: "Metric Parity Validation." Comparing the old metric calculation against the new one in a staging warehouse to ensure the "Historical Trend" is preserved and explained.
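Metric Parity Validation amounts to computing both definitions over the same history and comparing them period by period. In this sketch the `events` table, its columns, and the monthly grain are hypothetical, and SQLite stands in for the staging warehouse. The per-month ratio is what lets the team explain, rather than merely notice, a shift in the historical trend.

```python
import sqlite3

# Old definition: logged in that month. New definition: purchased that month.
PARITY_SQL = """
SELECT month,
       COUNT(DISTINCT CASE WHEN event_type = 'login' THEN user_id END)    AS active_old,
       COUNT(DISTINCT CASE WHEN event_type = 'purchase' THEN user_id END) AS active_new
FROM events
GROUP BY month
ORDER BY month
"""


def metric_parity(conn):
    """Return (month, old_count, new_count, new/old ratio) per month."""
    report = []
    for month, old, new in conn.execute(PARITY_SQL):
        ratio = new / old if old else None  # None when the old metric was zero
        report.append((month, old, new, ratio))
    return report
```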

The Observability Revolution: Monte Carlo and Metaplane

In 2026, we no longer rely solely on manually written tests. We use Data Observability platforms.

1. Detecting "Unknown Unknowns"

- Monte Carlo: Uses ML to monitor the "Volume" and "Freshness" of your warehouse tables.

- The Validation: If the `Daily_Revenue` table usually gets 50,000 updates an hour but suddenly stops being updated, Monte Carlo flags it as a "Freshness Incident" even if no explicit test was written for it.
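Monte Carlo's anomaly models are proprietary, so the sketch below is a deliberately simple stand-in rather than its algorithm: it learns a table's typical gap between updates (the median) and raises a freshness incident when the current silence is several times longer. The `tolerance` multiplier and the input format are assumptions.

```python
from datetime import datetime, timedelta
from statistics import median


def freshness_incident(update_times, now, tolerance=3.0):
    """Flag a table as stale if the time since its last update is much longer
    than its typical gap between updates.

    `update_times` is a sorted list of past update timestamps; `tolerance`
    is a hypothetical multiplier, not a vendor setting.
    """
    if len(update_times) < 2:
        return False  # not enough history to learn a cadence
    gaps = [later - earlier for earlier, later in zip(update_times, update_times[1:])]
    typical = median(gaps)
    return (now - update_times[-1]) > tolerance * typical


if __name__ == "__main__":
    start = datetime(2026, 1, 1)
    history = [start + timedelta(minutes=i) for i in range(60)]  # one update per minute
    print(freshness_incident(history, start + timedelta(hours=2)))
```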

2. Lineage-Aware Impact Analysis

- Metaplane: Maps the "End-to-End Lineage" of data.

- The Validation: If a quality test fails on a "Bronze" (Raw) table, Metaplane shows the QA team exactly which downstream "Gold" tables, dashboards, and AI models are now "Untrusted."
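Blast-radius analysis is a graph traversal: start at the failing asset and walk every downstream edge. This breadth-first sketch assumes lineage has already been exported as an adjacency map (each asset to its direct consumers); the asset names are hypothetical and not taken from Metaplane's API.

```python
from collections import deque


def blast_radius(lineage, failed_node):
    """Return every downstream asset reachable from `failed_node`.

    `lineage` maps each asset to the assets that read from it directly.
    """
    seen = set()
    queue = deque([failed_node])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # guards against cycles and repeat visits
                seen.add(child)
                queue.append(child)
    return seen


if __name__ == "__main__":
    lineage = {
        "bronze.orders": ["silver.orders"],
        "silver.orders": ["gold.revenue", "gold.churn_features"],
        "gold.revenue": ["dashboard.exec_revenue"],
        "gold.churn_features": ["model.churn"],
    }
    print(sorted(blast_radius(lineage, "bronze.orders")))
```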

Zero-Trust Data Warehouse Security Validation

By 2026, security is a core quality attribute of the warehouse.

1. Row-Level Security (RLS) Verification

- The Scenario: A user from the "Marketing" role should only see customer data from their specific region.

- The Test: "Identity Ambiguity Validation." QA attempts to run a query using the identity of a marketing user and verifies that the warehouse engine correctly filters the rows without returning a "Permission Denied" error or leaking unauthorized data.
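The RLS check can be written as a small contract that holds on any platform: the query succeeds, returns at least one row, and returns nothing outside the user's region. The `run_as` adapter is hypothetical; on Snowflake it might switch to the marketing role before executing, and elsewhere it would use whatever impersonation the test environment supports. The test fixture is assumed to contain data for the allowed region, since otherwise an empty result would be legitimate.

```python
def verify_row_level_security(run_as, sql, role, allowed_region, region_col=0):
    """Run `sql` as `role` and check the RLS contract; return the row count.

    `run_as(role, sql)` is a hypothetical adapter you supply. It must return a
    list of row tuples, or raise PermissionError if the engine denies access.
    `region_col` is the index of the region column in each returned row.
    """
    try:
        rows = run_as(role, sql)
    except PermissionError as exc:
        raise AssertionError(f"{role} was denied instead of filtered") from exc
    if not rows:
        raise AssertionError(f"{role} saw no rows; the policy may be over-filtering")
    leaked = {row[region_col] for row in rows} - {allowed_region}
    if leaked:
        raise AssertionError(f"{role} can see other regions: {sorted(leaked)}")
    return len(rows)
```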

2. Dynamic Data Masking (DDM) Validation

- The Mechanism: Masking PII (such as emails or credit card numbers) based on the user's role.

- The Test: Verifying that for a "Data Analyst" role, the email field returns `user_XXXX@company.com`, while for a "System Admin" role, it returns the full, unmasked value.
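A sketch of the masking assertion, assuming the analyst and admin values were fetched by running the same query under each role, and that the masked format is literally `user_XXXX@company.com` as in the example above. The regex encodes that assumption and would change with the real masking policy.

```python
import re

# Assumed masked format from the example: user_XXXX@company.com
MASKED_EMAIL = re.compile(r"user_X{4}@company\.com")


def check_email_masking(analyst_value, admin_value, true_value):
    """Analyst must see the masked pattern; admin must see the real value."""
    if not MASKED_EMAIL.fullmatch(analyst_value or ""):
        raise AssertionError(f"analyst saw an unexpected value: {analyst_value!r}")
    if analyst_value == true_value:
        raise AssertionError("analyst saw the unmasked email")
    if admin_value != true_value:
        raise AssertionError(f"admin saw {admin_value!r} instead of the raw value")
    return True
```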

Testing Data Sovereignty and Residency in Global Warehouses

Global enterprises often use "Multi-Region" Snowflake or BigQuery setups.

1. The Regional Leakage Test

- Verification: Confirming that data belonging to a "UK Citizen" is physically stored in the `eu-west-2` (London) region and has not been replicated to a `us-east-1` (N. Virginia) bucket during a background sync process.

- Strategy: Automated auditing of the "Cloud Storage Metadata" to ensure the physical bucket location matches the legal residency requirements.
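The metadata audit can be expressed as a policy lookup: each object's data class maps to the regions where it may legally live. The object and policy shapes below are hypothetical. The helper `normalize_s3_region` encodes one real quirk worth handling if the metadata comes from S3: `GetBucketLocation` reports no location constraint for buckets in `us-east-1`.

```python
def residency_violations(objects, allowed_regions):
    """Return (key, data_class, region) for objects stored outside permitted regions.

    `objects` is a list of dicts with 'key', 'data_class', and 'region';
    `allowed_regions` maps each data class to a set of permitted regions.
    An unknown data class has no permitted regions, so it fails closed.
    """
    violations = []
    for obj in objects:
        permitted = allowed_regions.get(obj["data_class"], set())
        if obj["region"] not in permitted:
            violations.append((obj["key"], obj["data_class"], obj["region"]))
    return violations


def normalize_s3_region(location_constraint):
    # S3 GetBucketLocation returns an empty (null) LocationConstraint for us-east-1.
    return location_constraint or "us-east-1"
```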

2. Encryption Key Sovereignty

- Test: Verifying the "Kill Switch." If the customer revokes their "Customer-Managed Key" (CMK) from the cloud HSM, do the warehouse queries against that data fail immediately?
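The kill-switch test needs a timing bound, since "immediately" has to become something a test can fail on. In this sketch `revoke_key` and `run_query` are hypothetical adapters around the KMS and warehouse clients, `max_wait_s` and `poll_s` are illustrative defaults, and the clock and sleep functions are injectable so the harness can be exercised without real waiting.

```python
import time


def verify_kill_switch(revoke_key, run_query, max_wait_s=60.0, poll_s=5.0,
                       clock=time.monotonic, sleep=time.sleep):
    """Revoke the CMK, then poll until queries fail; return seconds until failure.

    `run_query()` is expected to raise once the warehouse can no longer decrypt
    the data. Any exception counts as failure here; a stricter harness would
    check for the platform's specific decryption error.
    """
    revoke_key()
    start = clock()
    while True:
        try:
            run_query()
        except Exception:
            return clock() - start  # query failed, so the kill switch worked
        if clock() - start >= max_wait_s:
            raise AssertionError(f"queries still succeed {max_wait_s}s after key revocation")
        sleep(poll_s)
```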

Essential Data Warehouse Validation Tools for 2026

| Tool | Core Use Case | Primary Benefit |
|---|---|---|
| dbt-expectations | Transformation Testing | A massive library of Great Expectations-style tests that run natively inside dbt. |
| Monte Carlo | Unified Observability | The "Control Plane" for the data warehouse, providing ML-driven anomaly detection. |
| Atlan | Active Metadata & Cataloging | Connects data quality metrics directly to the business glossary for better collaboration. |
| Soda | Data Quality Monitoring | Provides a simple, cross-platform language for writing and running warehouse checks. |
| Select.dev | Snowflake FinOps | Specialized in identifying and optimizing expensive, inefficient queries in Snowflake. |

Best Practices for 2026 Warehouse QA

- Test inside the Warehouse: Whenever possible, run your validation queries where the data lives (e.g., using Snowflake Data Metric Functions) to avoid expensive data egress.

- Enforce "Cost Budgets" on Tests: Even a testing script can be expensive. QA should monitor the "Cost per Test Run" to ensure the validation suite itself is efficient.

- Automate Metadata Extraction: Use tools like Atlan to automatically pull metadata and lineage, giving you a real-time map of your warehouse health.

- Use "Zero-Copy Cloning": In Snowflake, use cloning to create an identical copy of production data for testing without duplicating its storage; additional storage is billed only for data that is subsequently changed in the clone or the source.

- Benchmarking against "Ground Truth": Periodically run a "Golden Query" (a query known to be 100% accurate) and compare its output against the results of a new, complex transformation.

- Collaborate with FinOps: Treat query efficiency as a quality metric. A query that takes 10 minutes and $100 is a failure if there is a way to do it in 1 minute and $10.
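The Golden Query comparison works best as an order-insensitive multiset diff, so that duplicate rows are counted and row order does not cause false failures. The sketch assumes both result sets have been fetched as lists of hashable tuples; numeric columns may need rounding first if the two queries aggregate floats in a different order.

```python
from collections import Counter


def compare_to_golden(golden_rows, candidate_rows):
    """Multiset comparison of two result sets, ignoring row order.

    Returns (missing, extra): rows the golden query produced that the new
    transformation did not, and rows the new transformation produced that
    the golden query did not. Duplicate counts are respected.
    """
    golden, candidate = Counter(golden_rows), Counter(candidate_rows)
    missing = golden - candidate  # Counter subtraction keeps positive counts only
    extra = candidate - golden
    return sorted(missing.elements()), sorted(extra.elements())
```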

Summary

- Quality is Modular: Test at the schema, row, and business-logic levels.

- Costs are KPIs: In 2026, efficient SQL is a primary quality attribute.

- Architecture Matters: Tune your tests for the specific quirks of Snowflake, BigQuery, or Databricks.

- Observability is Protection: Use ML-driven tools to find the anomalies your manual tests missed.

- Lineage is Clarity: Always understand the "Blast Radius" of a data quality failure.

Conclusion

The data warehouse of 2026 is a high-performance, high-cost, and high-stakes environment. It is the engine that drives every dashboard, AI model, and business decision. However, that engine is only as reliable as the validation framework that protects it. By moving beyond simple row-counts and embracing a holistic data warehouse validation strategy—inclusive of architecture, performance, and cost—QA organizations can ensure that the "Brain" of the enterprise remains sharp, accurate, and cost-effective. In the age of big data, your job is not just to count the rows; it is to ensure that every single row is worth the price of its storage.

FAQs

1. What is "Zero-Copy Cloning"? A feature in Snowflake that allows you to create a logical copy of a table or database without actually duplicating the physical data, allowing for cost-effective testing.

2. How do BigQuery "Partitioned Tables" save money? They allow queries to "Prune" or skip entire segments of data that don't match the partition criteria (e.g., date), significantly reducing the volume of data scanned and thus the cost.

3. What is "Data Drift"? The gradual change in the distribution or meaning of data over time, which can eventually lead to AI models becoming inaccurate even if the "Software" is working perfectly.

4. What is a "Data Metric Function" (DMF)? A native Snowflake feature that allows you to define and run data quality checks directly on a table, with the results being stored in a system view for easy monitoring.

5. How is Databricks different from Snowflake? Databricks is built on the "Lakehouse" concept (using Delta Lake), giving engineers more control over Spark clusters, while Snowflake is a more "Managed Service" focused on ease of use.

6. What is "FinOps" in the context of data? Financial Operations for data, focusing on the cultural and technical shift to balance cloud cost with business performance.

7. Why is "Lineage" important in a data warehouse? It shows you the "Ancestry" of a data point—where it came from and which downstream reports or models rely on it.

8. What is "Referential Integrity"? The logical consistency between related tables (e.g., ensuring a "Sales" record doesn't exist for a "Product" that isn't in the product catalog).

9. Can I use Great Expectations with Snowflake? Yes. GX has deep integrations with all major data warehouses, allowing it to run SQL-based validations natively.

10. What is "Active Metadata"? Metadata that is not just stored in a catalog but is used to "Trigger" actions (e.g., automatically flagging a PII column or firing a Slack alert when a freshness check fails).

11. What is "Row-Level Security" (RLS)? A security feature that allows the warehouse to restrict access to specific rows in a table based on the characteristics of the user executing the query (e.g., their region or department).

12. How does "Dynamic Data Masking" protect PII? It obscures sensitive data in the result set of a query "on-the-fly" based on the user's permissions, without changing the data that is physically stored on the disk.

13. What is a "Customer-Managed Key" (CMK)? An encryption key that is owned and managed by the customer in their own Key Management Service (KMS), providing them with total control over who can decrypt their data in the warehouse.

14. Why is "Data Freshness" a quality metric? Because data that is accurate but "Stale" (too old) can lead to incorrect decisions in fast-moving industries like finance or e-commerce.

15. Can I use dbt effectively with Databricks? Yes. dbt has a specialized adapter for Databricks that allows it to utilize Delta Live Tables and the Photon engine for high-performance transformations and testing.