Building Scalable QA Pipelines in Large Organizations

Imagine a major global shipping port. Thousands of containers arrive every hour, destined for hundreds of different locations. If every single container had to be manually inspected by one person, the entire global supply chain would grind to a halt within minutes. To prevent this, ports use automated scanners, intelligent sorting systems, and specialized lanes to keep traffic flowing efficiently.

Modern enterprise software development works much the same way. A large organization doesn't have one application; it has hundreds of microservices, thousands of developers, and a constant stream of code commits. If your Quality Assurance (QA) process is a "manual inspection station," it becomes the bottleneck for every release. To stay competitive in 2026, organizations must move beyond simple testing and build scalable QA pipelines that function like a high-tech shipping port: fast, resilient, and virtually invisible.

Building these pipelines isn't just about hiring more testers or writing more scripts. It requires a fundamental rethink of how quality is defined, measured, and delivered at scale. In this guide, we will explore the architectural pillars, technical strategies, and cultural shifts required to build a QA pipeline that doesn't just keep up with development but accelerates it.

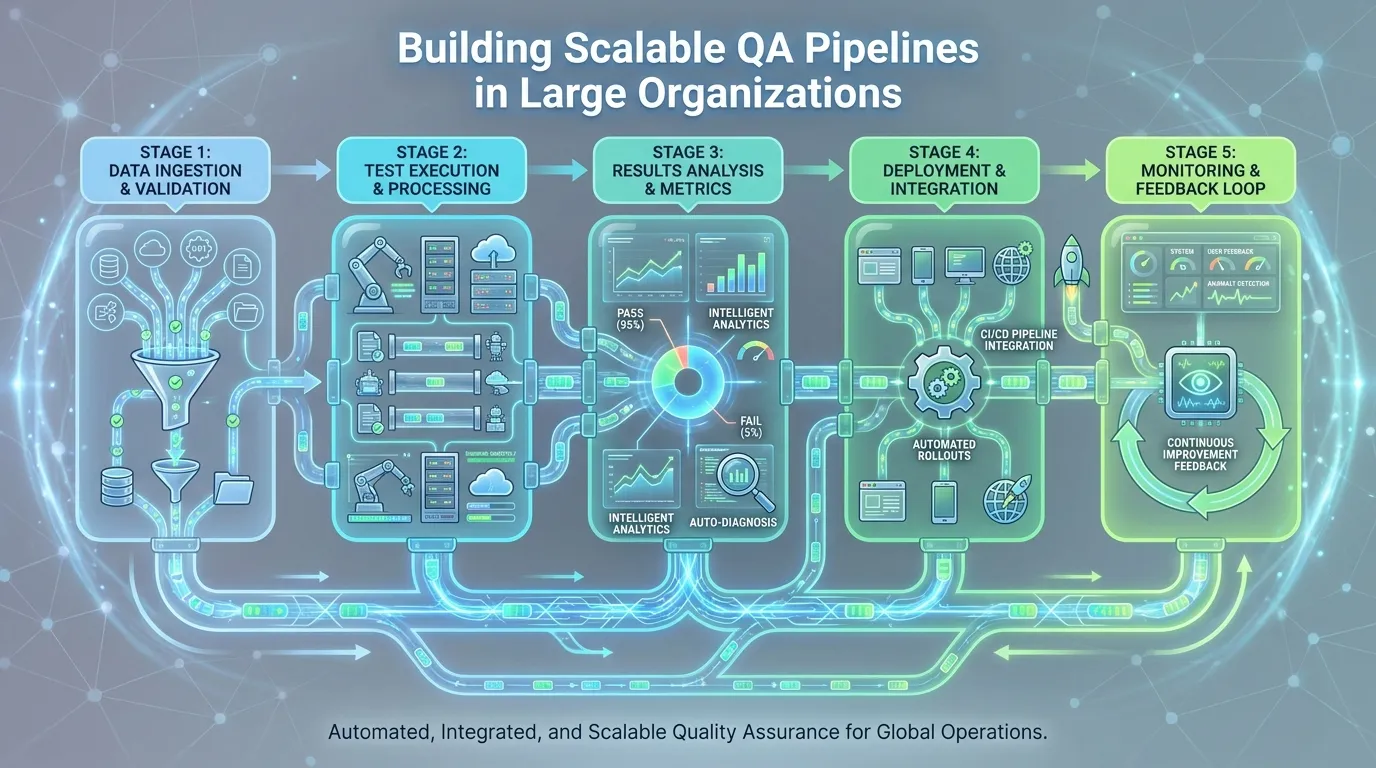

The Core Anatomy of a Scalable QA Pipeline

A pipeline is more than just a sequence of steps; it is a living system. For a pipeline to be truly scalable, it must be modular, ephemeral, and intelligent.

Orchestration vs. Execution

One of the most common mistakes in large organizations is conflating "orchestration" (the logic of when and how to run tests) with "execution" (the actual running of the tests). In a scalable model, these must be decoupled.

Orchestration is handled by tools like Jenkins, GitLab CI, or GitHub Actions. These tools manage the workflow, dependencies, and environment provisioning. Execution, on the other hand, should happen in a distributed, on-demand infrastructure. By separating these layers, you can swap out testing tools or scale your execution capacity without rewriting your entire pipeline logic.
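To make the separation concrete, here is a minimal sketch in Python. The names `Executor`, `LocalExecutor`, and `orchestrate` are hypothetical and do not correspond to any CI tool's API; the point is only that the orchestration function depends on an interface, so the execution backend can be swapped without touching the workflow logic.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass
class CaseResult:
    name: str
    passed: bool


class Executor(Protocol):
    """Execution layer: knows how to run tests, nothing about the workflow."""

    def run(self, tests: dict[str, Callable[[], bool]]) -> list[CaseResult]: ...


class LocalExecutor:
    """Runs tests in-process. A remote grid executor could replace it (assumption)."""

    def run(self, tests):
        return [CaseResult(name, bool(fn())) for name, fn in tests.items()]


def orchestrate(executor: Executor, tests) -> bool:
    """Orchestration layer: decides what runs and whether the gate passes."""
    results = executor.run(tests)
    return all(r.passed for r in results)
```

Calling `orchestrate(LocalExecutor(), {"smoke": lambda: True})` returns `True`. Moving to a distributed backend would mean writing another class with the same `run` method, not rewriting `orchestrate`.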

The Role of Ephemeral Environments

Traditional "static" QA environments are a relic of the past. In a large organization, having a single "Staging" environment creates "environmental contention." If Team A is testing a new feature on Staging, Team B has to wait. This is the opposite of scalability.

Advanced scalable QA pipelines utilize ephemeral environments. These are short-lived, containerized environments (using Docker and Kubernetes) that are spun up specifically for a single test run or pull request. The moment the tests are finished, the environment is destroyed. This ensures that every test run happens in a "clean" state, eliminating the "it worked on my machine" excuses and allowing dozens of teams to test simultaneously without interference.
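The create-use-destroy lifecycle can be sketched with a Python context manager. This is an illustration only: a temporary directory stands in for a real container or Kubernetes namespace, and `ephemeral_environment` and `run_in_clean_env` are hypothetical names.

```python
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def ephemeral_environment(pr_number: int):
    """Create an isolated workspace for one run and always destroy it.

    A temp directory stands in for a real container or namespace here.
    """
    workdir = Path(tempfile.mkdtemp(prefix=f"qa-pr-{pr_number}-"))
    try:
        yield workdir
    finally:
        shutil.rmtree(workdir, ignore_errors=True)  # teardown even if tests fail


def run_in_clean_env(pr_number: int) -> tuple[bool, Path]:
    """Use the environment, then report whether it existed during the run."""
    with ephemeral_environment(pr_number) as env:
        (env / "state.txt").write_text("test data")
        existed_during_run = (env / "state.txt").exists()
    return existed_during_run, env
```

Because teardown sits in a `finally` block, a failing test run still leaves nothing behind for the next run to trip over.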

Standardized Toolchains and Custom Wrappers

Scalability requires consistency. If ten different teams are using ten different testing frameworks with ten different reporting formats, your organization's quality data will be fragmented.

Large organizations succeed by creating a "Standardized Toolchain." However, generic tools often don't meet specific enterprise needs. Many organizations build Custom Wrappers around popular tools (like Selenium or Playwright). These wrappers include built-in company standards for logging, reporting, and error handling. This allows new teams to "plug and play" into the existing pipeline without having to configure everything from scratch.
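As a rough illustration of what such a wrapper does, the sketch below uses a plain Python decorator rather than a real Selenium or Playwright integration. The names `standard_test`, `REPORT`, and the record fields are assumptions made for this example, not an established convention.

```python
import functools
import logging
import time

logger = logging.getLogger("qa.wrapper")
REPORT: list[dict] = []  # stand-in for a shared reporting backend


def standard_test(team: str):
    """Wrap a test function with company-wide logging and reporting."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            record = {"team": team, "test": fn.__name__, "status": "passed", "error": None}
            try:
                fn(*args, **kwargs)
            except Exception as exc:  # record the failure in the standard format
                record["status"] = "failed"
                record["error"] = f"{type(exc).__name__}: {exc}"
                logger.error("%s failed: %s", fn.__name__, exc)
            record["duration_s"] = round(time.perf_counter() - start, 4)
            REPORT.append(record)
            return record
        return wrapper
    return decorator


@standard_test(team="checkout")
def check_cart_total():
    assert 2 + 2 == 4


@standard_test(team="checkout")
def check_discount():
    raise ValueError("discount service unreachable")
```

A new team only adds the decorator; every result then arrives in `REPORT` with the same fields, whatever the test itself does.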

Technical Pillars of Scalability

Once the foundation is set, you need the technical "muscle" to handle the volume of an enterprise codebase.

Parallelization Strategies for Global Teams

If your regression suite takes eight hours to run, you cannot deploy ten times a day. Parallelization is the only way to achieve speed at scale. This involves breaking your test suite into smaller "chunks" and running them concurrently across hundreds of nodes.

Advanced strategies go beyond simple threading and use Distributed Test Execution. For example, a test suite of 5,000 tests can be split into 50 groups of 100. Run on 50 different cloud containers simultaneously, an eight-hour serial run (480 minutes) needs roughly 10 minutes of test time per container, so it can finish in less than 15 minutes even with startup overhead, provided the groups are balanced by duration. For global organizations with teams in different time zones, this means every team gets feedback in minutes, not hours, whenever they push.
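Splitting by test count only works well when tests take similar time. One common alternative, sketched below, is a greedy split on historical durations: the longest remaining test always goes to the currently lightest shard. The function names are hypothetical, and the duration data is assumed to come from previous runs.

```python
import heapq


def shard_by_duration(durations: dict[str, float], shard_count: int) -> list[list[str]]:
    """Greedy split: assign the longest remaining test to the lightest shard."""
    shards: list[list[str]] = [[] for _ in range(shard_count)]
    heap = [(0.0, i) for i in range(shard_count)]  # (total seconds, shard index)
    heapq.heapify(heap)
    for name, seconds in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        load, idx = heapq.heappop(heap)
        shards[idx].append(name)
        heapq.heappush(heap, (load + seconds, idx))
    return shards


def ideal_wall_clock_minutes(serial_minutes: float, shard_count: int) -> float:
    """Best-case wall clock time, ignoring startup and scheduling overhead."""
    return serial_minutes / shard_count
```

For the numbers in the text, `ideal_wall_clock_minutes(480, 50)` gives 9.6 minutes, which is why "under 15 minutes" is realistic once container startup and result collection are added.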

Leveraging Infrastructure as Code (IaC) for Pipeline Stability

A pipeline is only as stable as the infrastructure it runs on. Manually configured servers are prone to "configuration drift," where two supposedly identical environments behave differently over time.

In a scalable QA pipeline, infrastructure must be treated as code. Using tools like Terraform or Pulumi, you define your testing servers, databases, and network configurations in code files. Because every pipeline environment is then built from the same versioned definitions, it is a reproducible match for the production configuration instead of a hand-built approximation. IaC also allows you to version-control your infrastructure, making it easy to roll back changes if a configuration update breaks the pipeline.
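To show what "configuration drift" means in practice, the following sketch compares a declared environment spec against what is actually observed. It is not how Terraform or Pulumi work internally; `fingerprint` and `find_drift` are hypothetical helpers, and the spec is a plain dictionary.

```python
import hashlib
import json


def fingerprint(spec: dict) -> str:
    """Stable hash of an environment spec (key order does not matter)."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def find_drift(declared: dict, observed: dict) -> dict:
    """Return the keys whose observed value differs from the declared one."""
    keys = declared.keys() | observed.keys()
    return {
        k: {"declared": declared.get(k), "observed": observed.get(k)}
        for k in sorted(keys)
        if declared.get(k) != observed.get(k)
    }
```

Two environments with the same fingerprint were built from the same definition. When fingerprints differ, `find_drift` names the setting that changed, for example a database version that was upgraded by hand on one server.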

Implementing Intelligent Release Gating

As organizations scale, "test everything" becomes an impossible strategy. Imagine an application with 100 microservices. If every minor update to the "Login" service triggers a full regression of the "Shipping" and "Accounting" modules, your pipeline will be permanently clogged.

Risk-Based Automated Deployment

The solution is Intelligent Release Gating. This involves using metadata and impact analysis to determine exactly which tests need to run for a specific change. By analyzing the dependency graph of your code, the pipeline can identify which services are actually affected by a commit. In 2026, many enterprise pipelines use AI models to assign a "Risk Score" to every pull request. A low-risk change (like a UI color update) might only trigger basic linting and unit tests, while a high-risk change (like a database schema update) triggers the full suite.
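A minimal sketch of both ideas follows. The dependency graph, service names, and file-based rules are invented for illustration, and the suite selection is a simple rule set standing in for the AI risk model described above.

```python
from collections import deque

# Hypothetical graph: service -> services that depend on it.
DEPENDENTS = {
    "auth-lib": ["login", "accounting"],
    "login": ["web-frontend"],
    "shipping": ["web-frontend"],
    "accounting": [],
    "web-frontend": [],
}


def affected_services(changed: set[str], dependents: dict[str, list[str]]) -> set[str]:
    """Breadth-first walk from the changed services through their dependents."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for downstream in dependents.get(current, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return seen


def select_suites(changed_files: list[str]) -> list[str]:
    """Toy rule-based gate; a real pipeline might use a trained risk model."""
    if any(f.endswith(".sql") or "migrations/" in f for f in changed_files):
        return ["lint", "unit", "integration", "full-regression"]
    if all(f.endswith((".css", ".md")) for f in changed_files):
        return ["lint", "unit"]
    return ["lint", "unit", "integration"]
```

With this graph, a commit to `login` affects only `login` and `web-frontend`, so the `shipping` and `accounting` suites never start.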

Predictive Analytics to Skip "Safe" Tests

Advanced pipelines leverage historical data to optimize their test suites. If a particular set of tests has passed 1,000 times in a row, even as the related code kept changing, those tests may be contributing little new information. Predictive analytics models can flag these tests for "Review" or suggest skipping them in non-critical builds. This isn't about ignoring bugs; it's about allocating your testing resources to the areas where failures are most likely.
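Before any model is involved, the basic rule can be expressed as a filter over test history. The sketch below is a plain threshold check, not predictive analytics; the `History` record and the default thresholds are assumptions for this example.

```python
from dataclasses import dataclass


@dataclass
class History:
    name: str
    outcomes: list[bool]          # oldest first, True = passed
    related_code_changes: int     # commits that touched the code under test


def review_candidates(histories, min_runs=1000, min_changes=1):
    """Flag tests that never failed across many runs despite code changes."""
    flagged = []
    for h in histories:
        enough_runs = len(h.outcomes) >= min_runs
        never_failed = all(h.outcomes)
        if enough_runs and never_failed and h.related_code_changes >= min_changes:
            flagged.append(h.name)
    return flagged
```

The output is a list for human review, not an automatic skip list. A test that has never failed may be redundant, but it may also be asserting too little.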

Overcoming Scalability Bottlenecks

Even with the best tools, large organizations often face "hidden" bottlenecks that stall the pipeline.

Solving the "Merge Hell" with Continuous Integration

In a large organization, dozens of developers might be committing code at once. If your pipeline only runs after a merge, you enter "Merge Hell," where one developer's bug breaks the build for everyone else.

Scalable pipelines use Pre-Merge Validation. Every pull request must pass a subset of automated tests before it can be merged into the main branch. This ensures that the primary codebase remains clean and deployable at all times. To handle the volume, organizations use merge queues (such as GitHub's merge queue or GitLab's merge trains) to automate the sequence of merges, so that each change is tested on top of the changes queued ahead of it, in the exact order it will be integrated.
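The ordering guarantee of a merge queue can be shown with a simplified simulation. Real merge queues also batch changes and run builds speculatively in parallel; this sketch processes one change at a time, and `process_merge_queue` and its `passes` callback are hypothetical.

```python
from typing import Callable


def process_merge_queue(
    main: list[str],
    queue: list[str],
    passes: Callable[[list[str]], bool],
) -> tuple[list[str], list[str]]:
    """Test each queued change on top of everything merged before it.

    Returns (new main branch, rejected changes). `passes` stands in for
    running the pre-merge test subset against a candidate branch state.
    """
    rejected = []
    for change in queue:
        candidate = main + [change]
        if passes(candidate):
            main = candidate          # merge in queue order
        else:
            rejected.append(change)   # eject; later changes are tested without it
    return main, rejected
```

If `pr-2` breaks the build, it is ejected and `pr-3` is validated against `main` plus `pr-1` only, so one developer's bug never lands on the shared branch.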

Managing Tool Sprawl in Large Organizations

When an organization grows through acquisitions or rapid hiring, "Tool Sprawl" is inevitable. Team A uses Selenium, Team B uses Cypress, and Team C uses a legacy commercial tool. This makes it impossible to have a unified view of quality.

To overcome this, organizations establish a Quality Center of Excellence (CoE). The CoE doesn't just mandate tools; it provides "Standardized Services." For example, the CoE might provide a centralized "Test Data Service" or a shared "Dashboarding Platform" (like Grafana or Allure) that all tools must integrate with. This allows individual teams some flexibility while ensuring that the organization as a whole has clear, standardized metrics.
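One way a shared dashboard can accept results from different tools is a small adapter per tool that maps raw output into a common record. The two input shapes below are invented for illustration and are not the actual output formats of Selenium, Cypress, or any other product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityRecord:
    team: str
    test_id: str
    status: str        # "passed" | "failed" | "skipped"
    duration_ms: int


def from_tool_a(team: str, raw: dict) -> QualityRecord:
    """Hypothetical tool A reports seconds and a boolean 'ok' flag."""
    status = "passed" if raw["ok"] else "failed"
    return QualityRecord(team, raw["name"], status, round(raw["seconds"] * 1000))


def from_tool_b(team: str, raw: dict) -> QualityRecord:
    """Hypothetical tool B reports milliseconds and an uppercase state."""
    return QualityRecord(team, raw["title"], raw["state"].lower(), raw["ms"])


def pass_rate(records: list[QualityRecord]) -> float:
    """Share of executed (non-skipped) tests that passed."""
    executed = [r for r in records if r.status != "skipped"]
    if not executed:
        return 0.0
    return sum(r.status == "passed" for r in executed) / len(executed)
```

Teams keep their tools, and the organization-wide metric is computed over `QualityRecord` values only.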

Quality Engineering (QE) vs. Traditional QA

The most significant change in building scalable QA pipelines is not technical; it's organizational. We are seeing a massive shift from "Quality Assurance" to "Quality Engineering."

Building a Culture of Shared Responsibility

In a traditional QA model, the tester is seen as the "gatekeeper" who holds up the release. In a Quality Engineering model, quality is everyone's responsibility. Developers write unit and integration tests as part of their feature work. Operational teams provide the infrastructure for testing. Product managers define "Acceptance Criteria" that are clear enough to be automated. When the entire team "owns" quality, the pipeline stops being a bottleneck and starts being a safety net.

The SDET’s Role in Pipeline Optimization

The Software Development Engineer in Test (SDET) is the architect of the pipeline. Their job is not to manually find bugs, but to build the tools and frameworks that allow bugs to be found automatically. In a large organization, SDETs focus on performance optimization of the test suite, reducing execution time, and building AI-driven failure analysis tools. They are the "Engineers of the Engine," constantly fine-tuning the pipeline to handle more volume with less friction.

Advanced Monitoring and Observability

A scalable pipeline doesn't stop once the "Deploy" button is pressed. In an enterprise setting, you must monitor the health of the pipeline itself.

Real-Time Feedback Loops for Developers

Speed is useless if the feedback is buried in a 50MB log file. Advanced pipelines use Observability Dashboards to provide real-time visualization of test results. Designers, developers, and product owners should all be able to see the status of the pipeline at a glance. By integrating these results into Slack or Microsoft Teams, you ensure that the moment a failure occurs, the right person is notified with a direct link to the failing test and the relevant section of its log.
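A notification is only useful if it is short and points straight at the failure. The sketch below builds a JSON body with a single `text` field, the shape Slack incoming webhooks accept; the function name, the message layout, and the example URL are assumptions, and nothing is sent over the network.

```python
import json


def build_failure_message(test_name: str, owner: str, commit: str, log_url: str) -> str:
    """Build a JSON body for a chat webhook; nothing is sent here."""
    text = (
        f":red_circle: `{test_name}` failed on commit `{commit[:8]}`\n"
        f"Owner: {owner}\n"
        f"Logs: <{log_url}|open failing step>"
    )
    return json.dumps({"text": text})
```

A pipeline step would POST this body to the webhook URL configured for the owning team's channel, so the message reaches the people who can act on it.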

Tracing Defects from Pipe to Production

In complex microservices architectures, a bug in Production might be caused by a subtle interaction between three different services. Scalable pipelines use Distributed Tracing (with tools like Jaeger or Honeycomb) to follow a request as it moves through the entire stack. Seeing exactly which service calls were involved lets teams reproduce production bugs accurately in their own test environments, significantly reducing the "Discovery-to-Fix" time.

Actionable Framework: Scaling Your Pipeline in 90 Days

If you are currently struggling with a slow, fragile pipeline, here is a 90-day roadmap to transform your quality architecture.

Month 1: Audit and Standardization (Discovery Phase)

- Inventory Your Tools: Map out every testing tool used across the organization.

- Identify the Bottlenecks: Measure the "Lead Time for Changes." Where is code getting stuck?

- Define the "Golden Path": Select one primary toolchain (e.g., Playwright + GitHub Actions) and create a template that other teams can copy.
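For the bottleneck audit above, "Lead Time for Changes" is the time from a commit to its production deployment. A minimal sketch of the measurement, assuming you can export commit and deploy timestamps and per-stage durations from your CI system (the function names and data shapes are hypothetical):

```python
from datetime import datetime
from statistics import median


def lead_times_hours(changes: list[tuple[datetime, datetime]]) -> list[float]:
    """Hours from commit to production deploy for each change."""
    return [(deployed - committed).total_seconds() / 3600 for committed, deployed in changes]


def median_lead_time_hours(changes: list[tuple[datetime, datetime]]) -> float:
    """Median is less distorted by one stuck release than the mean."""
    return median(lead_times_hours(changes))


def slowest_stage(stage_minutes: dict[str, float]) -> str:
    """Name of the pipeline stage where changes spend the most time."""
    return max(stage_minutes, key=stage_minutes.get)
```

Tracking the median over time, together with the slowest stage, shows whether the later months of the roadmap are actually moving the number.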

Month 2: Automation and Cloud Integration (Scaling Phase)

- Implement Ephemeral Environments: Move away from static staging servers. Automate the creation of test environments using Docker.

- Enable Parallel Execution: Integrate with a cloud-based testing grid to run your tests in parallel.

- Set Up Centralized Reporting: Ensure all teams are reporting quality metrics to a single dashboard for organizational transparency.

Month 3: Intelligence and Optimization (Intelligence Phase)

- Implement Risk-Based Gating: Start using impact analysis to skip unnecessary tests in non-critical builds.

- Add AI Failure Analysis: Use ML-driven tools to automatically categorize test failures into "Infrastructure," "Bug," or "Flaky."

- Institutionalize Quality Engineering: Shift your metrics from "Bug Counts" to "Pipeline Speed" and "Defect Leakage."
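Before adopting an ML-driven tool for failure analysis, a rule-based baseline can establish the three categories named above. The sketch below is that baseline, not a machine-learning model; the log patterns and the `passed_on_retry` signal are assumptions chosen for illustration.

```python
import re

# Log fragments that usually point at the environment, not the product.
INFRA_PATTERNS = [
    r"connection refused",
    r"timed out",
    r"timeout",
    r"no space left on device",
]


def classify_failure(log: str, passed_on_retry: bool) -> str:
    """Return 'Flaky', 'Infrastructure', or 'Bug' using simple heuristics."""
    if passed_on_retry:
        return "Flaky"            # same code, different outcome
    lowered = log.lower()
    if any(re.search(pattern, lowered) for pattern in INFRA_PATTERNS):
        return "Infrastructure"
    return "Bug"                  # default: assume the product is at fault
```

Labels produced this way can later serve as a starting dataset if you train or evaluate a model on your own failure logs.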

Summary

Building scalable QA pipelines is the secret weapon of high-velocity enterprise teams.

- Decouple Execution: Separate your CI orchestration from the actual test execution to enable massive scaling.

- Go Ephemeral: Eliminate environment contention by using short-lived, containerized environments.

- Shift to QE: Move from a "Testing Phase" to a continuous "Quality Engineering" mindset.

- Automate Gating: Use risk-based analysis and predictive analytics to optimize your test cycles.

- Standardize Metrics: Centralized reporting is crucial for managing quality across dozens of teams.

Conclusion

The future of software delivery is not just about writing code faster; it's about validating that code faster and more accurately. In a large-scale enterprise, a slow QA pipeline is a form of technical debt that compounds over time. By investing in the architectural and cultural pillars of scalability, you turn your pipeline from a source of frustration into a competitive advantage.

Scaling your QA pipeline is a journey of continuous improvement. The tools will change, and the methodologies will evolve, but the core principle of making quality invisible and ubiquitous will always remain the hallmark of an industry-leading organization.

FAQs

1. How do we start building a scalable pipeline if we have massive legacy code? Start small. Don't try to automate everything at once. Focus on the most critical business paths and implement "Shift-Left" testing for all new features. Gradually migrate legacy tests to your new scalable framework as you refactor the code.

2. Which is more important: Parallel execution or Selective test execution? Both are vital. Parallel execution allows you to run large volumes of tests quickly, while Selective (Risk-Based) execution allows you to run fewer tests altogether. Ideally, you use Selective execution to narrow down the tests and Parallel execution to run the remaining ones as fast as your infrastructure allows.

3. How do we handle "Flaky" tests at an enterprise scale? Implement an "Auto-Quarantine" policy. If a test fails intermittently, it should be automatically removed from the critical pipeline and assigned to a developer for fixing. This prevents flaky tests from clogging the release for everyone else.

4. What is the role of Kubernetes in QA pipelines? Kubernetes is the engine for ephemerality. It allows you to spin up and tear down complex environments (including databases, APIs, and front-ends) in seconds to minutes, depending on image size and startup time, ensuring that every test run is isolated and repeatable.

5. How do we measure the ROI of a scalable QA pipeline? Track your Deployment Frequency and Lead Time for Changes. If a scalable pipeline allows you to deploy twice as often with the same number of people, the ROI is massive in terms of business agility and customer satisfaction.

6. Can a "low-code" approach work in a scalable enterprise pipeline? Yes, but only if the low-code tool is "Developer-Ready." This means it must integrate with CI/CD, support version control (Git), and have APIs that allow for advanced orchestration.

7. How do we manage test data in a distributed, global pipeline? Utilize Test Data as a Service (TDaaS). Instead of every test creating its own data, provide a centralized API that serves fresh, masked, or synthetic data to any test instance on demand, regardless of which region it's running in.
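The "Auto-Quarantine" policy from FAQ 3 can be sketched as follows. The detection rule used here (a test that both passed and failed on the same commit) is one common definition of flakiness, not the only one, and the function names and run format are hypothetical.

```python
from collections import defaultdict


def find_flaky(runs: list[tuple[str, str, bool]]) -> set[str]:
    """A test is flaky if it both passed and failed on the same commit.

    Each run is (test_name, commit_sha, passed).
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return {test for (test, _), seen in outcomes.items() if seen == {True, False}}


def split_pipeline(all_tests: list[str], flaky: set[str]) -> tuple[list[str], list[str]]:
    """Return (gating tests, quarantined tests), preserving order."""
    gating = [t for t in all_tests if t not in flaky]
    quarantined = [t for t in all_tests if t in flaky]
    return gating, quarantined
```

Quarantined tests should still run in a non-blocking job, so that the assigned developer can see when a fix has made them stable again.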