Big Data Testing Tools and Techniques

In the enterprise landscape of 2026, data is arguably the single most valuable asset an organization possesses. Financial institutions analyze terabytes of transaction logs to detect micro-fraud in real time. E-commerce giants process massive clickstream datasets to build hyper-personalized recommendation engines. Healthcare networks pool petabytes of anonymized clinical data to train predictive diagnostic models. However, this capability depends entirely on the integrity of the underlying data pipelines. If the raw data is corrupted, delayed, or maliciously manipulated, the algorithms relying on it will make catastrophic decisions at scale.

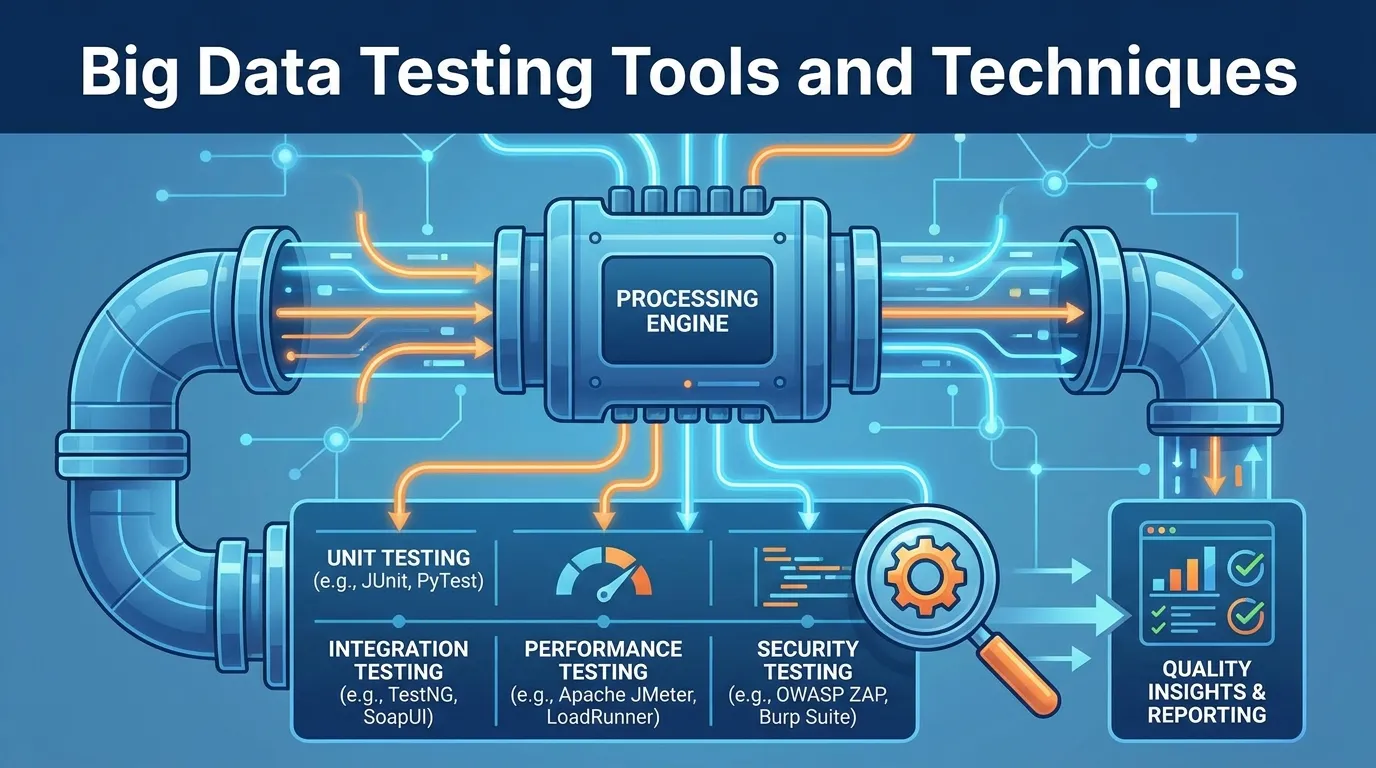

Traditional software testing focuses on whether an application functions: does the button click, does the API return a 200 OK status? Big Data testing shifts the paradigm. It focuses predominantly on whether massive volumes of complex data can be ingested, transformed, stored, and analyzed without losing fidelity or overwhelming the distributed architecture. In this architect's guide, we break down strategies for validating complex ETL pipelines, managing synthetic test data environments, and using the big data testing tools found across the modern enterprise ecosystem.

The Shift from Application QA to Data QA

Understanding big data testing requires discarding the mental model of a simple web application querying a single SQL server. Big data environments are defined by the "V's": Volume (massive scale), Velocity (streaming speed), Variety (structured, semi-structured, and unstructured data), and Veracity (accuracy and trustworthiness).

Testing across this spectrum introduces unique challenges that UI-centric QA automation frameworks (like Selenium or Cypress) were never designed to address.

- The "Black Box" Problem: Traditional testing injects a specific input and asserts a specific output. In big data, the data often passes through a "black box" of complex machine-learning transformations or MapReduce aggregations, where calculating the exact expected output by hand is impractical.

- Lack of Local Replication: You cannot download a 50-petabyte data lake to a developer's laptop to run a local test suite. Tests must be executed directly against massive, distributed cloud architectures (like AWS S3 or Snowflake), meaning "testing" inherently involves managing heavy infrastructure and compute costs.

- Continuous Streaming: Modern data is not just batched overnight; it streams continuously through platforms like Apache Kafka. Testing must validate that these streams survive broker and consumer failures and preserve event order where the design requires it (Kafka guarantees ordering only within a single partition), even under heavy network load.

Validating the ETL Pipeline (Extract, Transform, Load)

The backbone of any big data architecture is the ETL pipeline. ETL testing is arguably the most critical and time-consuming phase of data QA.

Phase 1: Extract (Data Ingestion Validation)

Data originates from countless disparate sources: messy CSV files, rigid relational databases, high-frequency API endpoints, and raw IoT sensor streams. The "Extract" test validates that data is pulled completely and safely into the staging environment.

- Data Completeness Checks: If 100 million rows were extracted but only 90 million arrived in the staging lake, testing must quickly identify exactly which records were dropped in transit.

- Schema Validation: Does the incoming data match the expected schema? If an external API suddenly changes a "Date" field from MM-DD-YYYY to a Unix timestamp, the ingestion test must catch this anomaly immediately, before it corrupts downstream tables.
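Both ingestion checks can be sketched in plain Python. This is a minimal illustration, assuming every record carries a unique id and that the pipeline expects dates as MM-DD-YYYY; the function names, the event_date field and the sample records are hypothetical. On a real data lake the same logic would run as a distributed anti-join and a schema check inside the processing engine rather than over in-memory lists.

```python
from datetime import datetime

EXPECTED_DATE_FORMAT = "%m-%d-%Y"  # MM-DD-YYYY, the format the pipeline was built for


def find_dropped_ids(source_ids, staged_ids):
    """Return the IDs present in the source extract but missing from staging."""
    return sorted(set(source_ids) - set(staged_ids))


def find_schema_violations(records, date_field="event_date"):
    """Return (id, value) pairs whose date field does not parse as MM-DD-YYYY."""
    violations = []
    for record in records:
        value = record.get(date_field)
        try:
            datetime.strptime(str(value), EXPECTED_DATE_FORMAT)
        except ValueError:
            violations.append((record["id"], value))
    return violations


# Hypothetical sample: record 3 never arrived, record 4 uses a Unix timestamp.
source = [1, 2, 3, 4, 5]
staged = [
    {"id": 1, "event_date": "01-15-2026"},
    {"id": 2, "event_date": "01-16-2026"},
    {"id": 4, "event_date": "1768521600"},  # upstream API switched formats
    {"id": 5, "event_date": "01-17-2026"},
]

print("Dropped:", find_dropped_ids(source, [r["id"] for r in staged]))
print("Schema violations:", find_schema_violations(staged))
```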

Phase 2: Transform (Business Logic Validation)

This is where raw data is cleaned, joined, aggressively scrubbed of PII (Personally Identifiable Information), and aggregated to meet business rules.

- Transformation Logic Checks: If the rule states "Calculate total revenue by region," testers must use automated SQL validation to ensure the aggregated tables perfectly match the expected business calculations.

- Data Quality Constraints: This involves testing for null values where none should exist, checking for duplicate primary keys, and ensuring string encodings (like UTF-8) survive the transition without generating "garbage" characters.
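A hedged sketch of both kinds of check: the business rule "total revenue by region" is recomputed independently from the source rows and compared with the pipeline's aggregated output, and each row is scanned for nulls, duplicate keys and the Unicode replacement character (U+FFFD), which Python inserts when bytes are decoded with errors="replace" and which serves here as a heuristic for encoding damage. Column names (order_id, region, revenue), sample data and helper names are hypothetical; monetary values are kept as decimal strings so the comparison is exact.

```python
from collections import defaultdict
from decimal import Decimal

REPLACEMENT_CHAR = "\ufffd"  # emitted when undecodable bytes are replaced


def expected_revenue_by_region(rows):
    """Independently recompute the business rule: total revenue per region."""
    totals = defaultdict(Decimal)
    for row in rows:
        totals[row["region"]] += Decimal(row["revenue"])
    return dict(totals)


def check_aggregates(rows, pipeline_output):
    """Return regions where the pipeline's totals differ from the recomputation."""
    expected = expected_revenue_by_region(rows)
    regions = set(expected) | set(pipeline_output)
    return sorted(
        r for r in regions
        if expected.get(r, Decimal(0)) != Decimal(pipeline_output.get(r, "0"))
    )


def check_quality(rows, key="order_id", required=("order_id", "region", "revenue")):
    """Flag nulls in required columns, duplicate keys and likely encoding damage."""
    issues, seen = [], set()
    for i, row in enumerate(rows):
        for col in required:
            if row.get(col) is None:
                issues.append((i, f"null {col}"))
        k = row.get(key)
        if k is not None:
            if k in seen:
                issues.append((i, f"duplicate {key}"))
            seen.add(k)
        for col, value in row.items():
            if isinstance(value, str) and REPLACEMENT_CHAR in value:
                issues.append((i, f"encoding damage in {col}"))
    return issues


rows = [
    {"order_id": 1, "region": "EMEA", "revenue": "100.00"},
    {"order_id": 2, "region": "EMEA", "revenue": "50.00"},
    {"order_id": 3, "region": "APAC", "revenue": "75.50"},
]
pipeline_output = {"EMEA": "150.00", "APAC": "75.00"}  # APAC total is wrong
dirty = rows + [{"order_id": 3, "region": "M\ufffdnchen", "revenue": None}]

print(check_aggregates(rows, pipeline_output))
print(check_quality(dirty))
```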

Phase 3: Load (Warehouse/Target Validation)

The final step moves the cleaned data into the target Data Warehouse (like Google BigQuery) or a structured Data Mart.

- Performance Monitoring: Does the load process complete within the designated maintenance window? If loading the daily partition suddenly takes 6 hours instead of 2, a recent change has most likely introduced a performance regression.

- Data Integrity Verification: Running heavy, automated checksums to ensure that the data at rest in the warehouse matches the exact mathematical hash of the data that existed at the end of the Transformation phase.
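One way to implement both Load checks, assuming both sides can be serialized to the same canonical representation: because a warehouse does not guarantee row order, the sketch computes an order-independent fingerprint (row count plus the sum of per-row SHA-256 digests). The duration check compares a load against a historical baseline; the 1.5x tolerance is an arbitrary illustrative threshold, and all names and data are hypothetical.

```python
import hashlib
import json

MOD = 2 ** 256


def row_digest(row):
    """SHA-256 of a canonical JSON encoding, so dictionary key order does not matter."""
    canonical = json.dumps(row, sort_keys=True, separators=(",", ":"), default=str)
    return int.from_bytes(hashlib.sha256(canonical.encode("utf-8")).digest(), "big")


def table_fingerprint(rows):
    """Order-independent fingerprint: row count plus the sum of row digests mod 2**256.

    Summing (rather than XOR-ing) keeps identical duplicate rows from cancelling out.
    """
    total, count = 0, 0
    for row in rows:
        total = (total + row_digest(row)) % MOD
        count += 1
    return count, total


def load_regressed(duration_s, baseline_s, tolerance=1.5):
    """Flag a load that takes more than `tolerance` times the historical baseline."""
    return duration_s > baseline_s * tolerance


transformed = [{"id": 1, "amount": "10.00"}, {"id": 2, "amount": "20.00"}]
warehouse = [{"amount": "20.00", "id": 2}, {"amount": "10.00", "id": 1}]  # reordered
corrupted = [{"id": 1, "amount": "10.00"}, {"id": 2, "amount": "2.00"}]

print(table_fingerprint(transformed) == table_fingerprint(warehouse))
print(table_fingerprint(transformed) == table_fingerprint(corrupted))
print(load_regressed(duration_s=6 * 3600, baseline_s=2 * 3600))
```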

Performance Testing in Distributed Clusters

Functional accuracy is not enough in big data. If a SQL query against a Spark cluster is perfectly accurate but takes 14 days to execute, the architecture has failed. Data volume is the ultimate stress test.

Testing Distributed Compute (Apache Spark)

Modern big data relies heavily on distributed computing frameworks like Apache Spark or Hadoop. Testing requires pushing these clusters to their limits to ensure the data is partitioned evenly across the nodes without creating dangerous "Data Skew." If one node in a 50-node cluster is assigned 90% of the data, typically because a handful of "hot" keys dominate the dataset (Data Skew), the entire job bottlenecks on that node and can crash with an "Out of Memory" exception. Performance testing therefore validates the underlying partitioning logic against massive, deliberately skewed datasets.
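Skew can be made measurable with a few lines of code. The sketch below assigns records to partitions with a stable hash (zlib.crc32, standing in for the engine's own partitioner), reports skew as the largest partition divided by the mean partition size, and then applies key salting, a common mitigation that spreads a hot key across several sub-keys. The data, the 50-partition layout and the helper names are hypothetical.

```python
import random
import zlib
from collections import Counter


def partition_sizes(keys, num_partitions):
    """Assign each key to a partition via a stable hash and count records per partition."""
    counts = Counter(zlib.crc32(str(k).encode("utf-8")) % num_partitions for k in keys)
    return [counts.get(p, 0) for p in range(num_partitions)]


def skew_ratio(sizes):
    """Largest partition divided by the mean partition size (1.0 means perfectly even)."""
    mean = sum(sizes) / len(sizes)
    return max(sizes) / mean


def salt_keys(keys, hot_keys, buckets, rng):
    """Spread each hot key across `buckets` sub-keys by appending a random salt."""
    return [f"{k}#{rng.randrange(buckets)}" if k in hot_keys else k for k in keys]


rng = random.Random(42)
# Hypothetical workload: 90% of events belong to one "hot" customer.
keys = ["customer_0"] * 9_000 + [f"customer_{rng.randrange(1, 1001)}" for _ in range(1_000)]

before = skew_ratio(partition_sizes(keys, 50))
after = skew_ratio(partition_sizes(salt_keys(keys, {"customer_0"}, 50, rng), 50))
print(f"skew before salting: {before:.1f}x, after salting: {after:.1f}x")
```

Salting is not free: any aggregation over a salted key needs a second step that strips the salt and combines the partial results.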

Validating Streaming Architectures

For real-time systems utilizing Apache Kafka or AWS Kinesis, testing shifts toward measuring pure latency and throughput. Teams construct automated load generators that bombard the event stream with millions of simulated "Clicks" per second, ensuring the downstream consumers can drain the queue without inducing a massive backlog or dropping vital events.

Test Data Management (TDM) and Synthetic Data

The greatest challenge facing a big data QA engineer is obtaining the data required to run the tests. Copying production data into a lower environment risks violating strict regulatory frameworks like GDPR or HIPAA.

The Problem with Masking

Historically, teams copied the production database and "Masked" it—replacing real names with fake names. However, masking massive 100-terabyte databases simply takes too long and costs too much in duplicate cloud storage fees to be practical for agile testing loops.

The Rise of Synthetic Data Generation

By 2026, much of the industry has shifted to AI-driven Synthetic Data. Rather than masking real user records, modern big data testing tools use machine-learning models that analyze the statistical distributions of the production database and then generate entirely artificial datasets, containing no real customer records, that mimic those statistical shapes. This allows QA to quickly spin up 5 billion rows of realistic but fake transaction data to stress-test a new data pipeline cluster safely.
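Production tools use far richer models, but the core loop (learn distributions from production data, then sample new records) can be illustrated with the standard library. This sketch assumes independent columns, a roughly normal numeric column and a categorical column described by its frequencies; it does not preserve correlations between columns and gives no formal privacy guarantee. Column names and data are hypothetical, and the "production" sample is itself generated for the example.

```python
import random
import statistics
from collections import Counter


def fit_profile(rows, numeric_col, categorical_col):
    """Learn simple per-column statistics from (production) rows."""
    values = [r[numeric_col] for r in rows]
    freqs = Counter(r[categorical_col] for r in rows)
    return {
        "numeric_col": numeric_col,
        "categorical_col": categorical_col,
        "mean": statistics.mean(values),
        "stdev": statistics.stdev(values),
        "categories": list(freqs),
        "weights": list(freqs.values()),
    }


def generate(profile, n, seed=0):
    """Sample n synthetic rows that follow the learned marginal distributions."""
    rng = random.Random(seed)
    cats = rng.choices(profile["categories"], weights=profile["weights"], k=n)
    return [
        {
            "txn_id": f"SYN-{i:08d}",  # prefixed so synthetic rows are easy to recognize
            profile["numeric_col"]: round(max(0.0, rng.gauss(profile["mean"], profile["stdev"])), 2),
            profile["categorical_col"]: cat,
        }
        for i, cat in enumerate(cats)
    ]


# Stand-in for a production table (hypothetical distribution).
prod_rng = random.Random(1)
production = [
    {"amount": prod_rng.gauss(120, 30), "channel": prod_rng.choice(["web", "web", "mobile", "store"])}
    for _ in range(5_000)
]

profile = fit_profile(production, "amount", "channel")
synthetic = generate(profile, 10_000)
print(f"production mean {profile['mean']:.2f}, "
      f"synthetic mean {statistics.mean(r['amount'] for r in synthetic):.2f}")
```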

Top Tools for Big Data Validation in 2026

The tooling ecosystem for big data QA differs markedly from traditional REST API testing platforms.

1. Great Expectations

This is one of the most widely used open-source tools for data validation. Data teams code specific rules (the "Expectations") directly into their Python data pipelines. For example, a developer can set an expectation that the "Age" column never contains a negative number. If the pipeline ingests corrupt data where a user is registered as "-5 years old," the validation fails, and the pipeline can be configured to halt and alert the team before the bad data permanently pollutes the warehouse.
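The snippet below is not the Great Expectations API, which has changed significantly between major versions; it is a plain-Python sketch of the pattern the tool implements: declare expectations as data, validate each batch, and stop the pipeline when any expectation fails. The ExpectationFailed exception and all helper names are hypothetical.

```python
class ExpectationFailed(Exception):
    """Raised to halt the pipeline before a bad batch reaches the warehouse."""


def expect_column_min(column, minimum):
    """Build an expectation: every non-null value in `column` is >= `minimum`."""
    def check(rows):
        bad = [r for r in rows if r.get(column) is not None and r[column] < minimum]
        return f"{column} >= {minimum}", bad
    return check


def expect_column_not_null(column):
    """Build an expectation: `column` is present and non-null in every row."""
    def check(rows):
        bad = [r for r in rows if r.get(column) is None]
        return f"{column} is not null", bad
    return check


def validate_batch(rows, expectations):
    """Run every expectation; raise with a summary if any of them fail."""
    failures = []
    for expectation in expectations:
        description, bad_rows = expectation(rows)
        if bad_rows:
            failures.append(f"{description}: {len(bad_rows)} failing row(s)")
    if failures:
        raise ExpectationFailed("; ".join(failures))
    return True


SUITE = [expect_column_not_null("user_id"), expect_column_min("age", 0)]

batch = [{"user_id": 1, "age": 34}, {"user_id": 2, "age": -5}]
try:
    validate_batch(batch, SUITE)
    print("batch accepted, loading to warehouse")
except ExpectationFailed as err:
    print(f"pipeline halted: {err}")  # a real pipeline would also send an alert here
```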

2. QuerySurge

QuerySurge is the enterprise heavyweight for automated ETL testing. It connects directly to disparate data stores (e.g., querying an Oracle database and an AWS S3 bucket in the same test) and runs automated SQL queries that compare source and target data to verify that it was transformed correctly during the migration. It is especially useful for testing large cloud migrations (e.g., moving on-premises databases into Snowflake).

3. lakeFS

Traditional software uses Git to version code. lakeFS brings the same Git-like versioning to the Data Lake itself. This allows a QA engineer to instantly create an isolated, zero-copy "Branch" of a 5-petabyte data lake. They can run destructive transformation tests on this branch safely; if a test fails, they simply delete the branch without ever modifying or duplicating the underlying production data.
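lakeFS achieves zero-copy branching by versioning metadata that points at immutable objects in the underlying storage, so creating a branch copies pointers rather than data. The toy model below illustrates that copy-on-write idea; it is a conceptual sketch with hypothetical names, not lakeFS's implementation or API (in practice you would use the lakectl CLI or a lakeFS SDK).

```python
class ToyLake:
    """Copy-on-write branching: branches map paths to shared, immutable object IDs."""

    def __init__(self):
        self.objects = {}             # object_id -> bytes (the expensive storage layer)
        self.branches = {"main": {}}  # branch -> {path: object_id} (cheap metadata)
        self._next_id = 0

    def write(self, branch, path, data):
        """Store a new immutable object and point this branch's path at it."""
        object_id = self._next_id
        self._next_id += 1
        self.objects[object_id] = data
        self.branches[branch][path] = object_id

    def read(self, branch, path):
        return self.objects[self.branches[branch][path]]

    def create_branch(self, name, source="main"):
        """Zero-copy: duplicate only the path -> object mapping, never the data."""
        self.branches[name] = dict(self.branches[source])

    def delete_branch(self, name):
        del self.branches[name]


lake = ToyLake()
lake.write("main", "sales/2026-01.parquet", b"original rows")

lake.create_branch("qa-destructive-test")
objects_before = len(lake.objects)  # branching added no stored objects
lake.write("qa-destructive-test", "sales/2026-01.parquet", b"mangled by a failed transform")

print(lake.read("main", "sales/2026-01.parquet"))  # the main branch still sees the original
lake.delete_branch("qa-destructive-test")
```

Deleting the branch leaves the orphaned object behind; real systems reclaim such objects through garbage collection.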

Step-by-Step: Testing a Cloud Data Lake Migration

The highest-risk operation in modern data engineering is migrating legacy on-premises data stores into cloud-native Data Lakes. The following roadmap outlines how to test such a migration.

Step 1: Pre-Migration Schema Validation

Before moving any data, use schema comparison tools to map the rigid schemas of the legacy relational databases against the "schema-on-read" configurations of the target Cloud Data Lake.
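A minimal sketch of that comparison, assuming both schemas have been exported as column-to-type dictionaries. The TYPE_MAP translation table and the sample schemas are hypothetical; the correct mapping depends on the specific legacy database and target platform.

```python
# Hypothetical mapping from legacy column types to the types the target lake should expose.
TYPE_MAP = {
    "NUMBER": "decimal",
    "VARCHAR2": "string",
    "DATE": "timestamp",
}


def compare_schemas(legacy, target, type_map=TYPE_MAP):
    """Return human-readable differences between a legacy schema and the target schema."""
    problems = []
    for column, legacy_type in legacy.items():
        if column not in target:
            problems.append(f"missing in target: {column}")
            continue
        expected = type_map.get(legacy_type, legacy_type.lower())
        if target[column] != expected:
            problems.append(f"type mismatch on {column}: expected {expected}, found {target[column]}")
    for column in sorted(target.keys() - legacy.keys()):
        problems.append(f"unexpected column in target: {column}")
    return problems


legacy = {"ORDER_ID": "NUMBER", "CUSTOMER": "VARCHAR2", "ORDER_DATE": "DATE", "REGION": "VARCHAR2"}
target = {"ORDER_ID": "decimal", "CUSTOMER": "string", "ORDER_DATE": "string", "LOAD_TS": "timestamp"}

for problem in compare_schemas(legacy, target):
    print(problem)
```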

Step 2: Parallel Batch Execution

Do not switch off the old system immediately. Run the legacy on-premises pipeline and the new cloud pipeline in parallel on the same inputs.

Step 3: Automated Delta Comparisons

Utilize a tool like QuerySurge to run massive SQL comparisons across both targets. If the legacy system reports $1,000,000 in regional sales, and the new cloud Lake reports $999,998, the test fails. The data engineering team must troubleshoot that $2 discrepancy in transformation logic before the cutover.
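QuerySurge configures these comparisons through its own interface; the sketch below only illustrates the underlying reconciliation logic, assuming each system's query returns per-region totals as decimal strings. Decimal arithmetic avoids floating-point rounding noise that would otherwise force a non-zero tolerance, and the zero default tolerance mirrors the rule that any mismatch fails. Names and figures are hypothetical apart from the $1,000,000 vs. $999,998 example.

```python
from decimal import Decimal


def reconcile(legacy_totals, cloud_totals, tolerance=Decimal("0")):
    """Compare per-key totals from two systems; return keys whose delta exceeds tolerance."""
    discrepancies = {}
    for key in sorted(legacy_totals.keys() | cloud_totals.keys()):
        legacy = Decimal(legacy_totals.get(key, "0"))
        cloud = Decimal(cloud_totals.get(key, "0"))
        delta = cloud - legacy
        if abs(delta) > tolerance:
            discrepancies[key] = delta
    return discrepancies


legacy = {"North": "1000000.00", "South": "250000.00"}
cloud = {"North": "999998.00", "South": "250000.00"}

diffs = reconcile(legacy, cloud)
print(diffs)  # any entry here blocks the cutover
```

A region missing from one side is treated as a zero total, so it surfaces as a discrepancy instead of being silently skipped.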

Step 4: Scale and Performance Profiling

Once accuracy is verified, flood the new cloud architecture with Synthetic Data to ensure the new compute clusters auto-scale fast enough to meet the business SLAs without driving cloud billing costs out of control.

CI/CD Integration: DataOps

Testing cannot be an isolated phase in big data; it must be integrated into a "DataOps" CI/CD pipeline.

In 2026, when a data engineer writes a new transformation (e.g., an Apache Airflow DAG or the Spark job it orchestrates), the code is committed to a Git repository. This triggers an automated pipeline:

- Spin-up: The pipeline creates an ephemeral Spark cluster.

- Data Provisioning: lakeFS mounts a zero-copy branch of a massive test dataset.

- Execution: The new script runs the transformation against the test data.

- Validation: Great Expectations validates that the newly transformed data perfectly meets all business rules.

- Tear-down: The ephemeral cluster and test branch are destroyed. If validation passes, the script is merged into production; if it fails, the developer receives an immediate alert without ever impacting the live Data Warehouse.
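The control flow of such a pipeline can be sketched as follows. Each stage is a hypothetical stub standing in for real calls (a cluster API, lakeFS, the transformation job, Great Expectations); the point of the sketch is the try/finally structure, which guarantees teardown whether validation passes, fails or raises, so failed runs never leave an ephemeral cluster running up costs.

```python
class PipelineRun:
    """Hypothetical stubs for each CI stage; real versions would call external services."""

    def __init__(self, transform_ok=True):
        self.transform_ok = transform_ok
        self.log = []

    def spin_up_cluster(self):
        self.log.append("cluster up")

    def provision_branch(self):
        self.log.append("lakeFS branch created")

    def run_transformation(self):
        self.log.append("transformation executed")

    def validate(self):
        self.log.append("validation run")
        return self.transform_ok

    def tear_down(self):
        self.log.append("cluster and branch destroyed")


def ci_pipeline(run):
    """Return 'merge' or 'alert'; teardown always happens, even if a stage raises."""
    try:
        run.spin_up_cluster()
        run.provision_branch()
        run.run_transformation()
        passed = run.validate()
    finally:
        run.tear_down()
    return "merge" if passed else "alert"


good, bad = PipelineRun(transform_ok=True), PipelineRun(transform_ok=False)
print(ci_pipeline(good), ci_pipeline(bad))
print(bad.log[-1])
```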

Summary

To master the complexity of scale, an enterprise big data QA strategy must focus heavily on automation and data management:

- Acknowledge the Shift: Testing big data is fundamentally about verifying mathematical transformations and architectural load, not UI functionality.

- Validate the Pipeline: Rigorously test the Extract, Transform, and Load (ETL) stages for both data completeness and logic accuracy.

- Test Distributed Performance: Push Apache Spark clusters with skewed workloads to identify hidden "Out of Memory" bottlenecks.

- Utilize Synthetic Data: Replace slow, costly masking of production data with statistically modeled, GDPR-conscious synthetic data for agile testing loops.

- Embrace DataOps: Automate validation checks directly into the continuous integration pipeline using tools like Great Expectations and lakeFS.

Conclusion

Data is only valuable if it is accurate and available. The cost of bad data entering a machine-learning model or a financial report is astronomical. Organizations can no longer rely on manual spot-checks or simple SQL row counts to validate massive data migrations or complex streaming events. By adopting rigorous data engineering mindsets, investing in AI-driven synthetic data management, and integrating modern big data testing tools into automated DataOps pipelines, enterprises can protect the Veracity of their data lakes even at the limits of scale.

FAQs

1. Does big data testing replace traditional functional testing? No, it complements it. If you are building a dashboard application, big data QA tests the massive queries powering the dashboard, while traditional functional QA tests whether the UI charts render correctly on the screen.

2. Why is "Data Skew" such a big problem in testing? Distributed computing relies on splitting data evenly across, say, 100 servers so they finish their work at roughly the same time. Data skew means the split is badly uneven, usually because a few keys account for most of the records: one server does 99% of the work while the other 99 sit mostly idle. Testing must aggressively hunt for the partitioning choices and data patterns that cause skew.

3. What is the difference between Hadoop and Spark testing? Hadoop (specifically MapReduce) traditionally writes intermediate data to disk between processing steps, making it robust but slow. Spark keeps working data in memory (RAM) where possible, making it up to 100x faster for some workloads but more susceptible to "Out of Memory" failures. Spark testing therefore focuses much more heavily on memory profiling.

4. How do we test real-time streaming data like Kafka? You test streaming by measuring "Consumer Lag": how far the consumers have fallen behind the producers, measured per partition as the difference between the latest offset written to the topic and the offset the consumer group has committed (it can also be expressed as a time delay). If the lag grows continuously during a performance test, your consumers are bottlenecked.
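Kafka reports lag per partition as the number of messages between the partition's log-end offset and the consumer group's committed offset (the LAG column printed by kafka-consumer-groups.sh --describe). The sketch below sums that lag across partitions for several samples taken during a load test and flags a consistent upward trend. The offsets and helper names are hypothetical; in practice the numbers would come from that tool or from a Kafka admin client.

```python
def total_lag(log_end_offsets, committed_offsets):
    """Sum per-partition lag: log-end offset minus the group's committed offset."""
    return sum(
        # Simplification: a partition with no commit is treated as fully behind.
        max(0, end - committed_offsets.get(partition, 0))
        for partition, end in log_end_offsets.items()
    )


def lag_keeps_growing(lag_samples, min_samples=3):
    """True if lag increased at every sample, i.e. consumers are not keeping up."""
    if len(lag_samples) < min_samples:
        return False
    return all(later > earlier for earlier, later in zip(lag_samples, lag_samples[1:]))


# Hypothetical (log-end, committed) offsets for 3 partitions, sampled every 10 seconds.
samples = [
    ({0: 1_000, 1: 1_050, 2: 980}, {0: 900, 1: 1_000, 2: 950}),
    ({0: 2_000, 1: 2_100, 2: 1_990}, {0: 1_700, 1: 1_800, 2: 1_750}),
    ({0: 3_000, 1: 3_150, 2: 2_985}, {0: 2_400, 1: 2_500, 2: 2_450}),
]
lags = [total_lag(end, committed) for end, committed in samples]
print(lags, "bottlenecked" if lag_keeps_growing(lags) else "keeping up")
```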

5. Is synthetic data actually useful for testing complex ML models? Yes. Advanced generative AI can create synthetic datasets that preserve many of the multi-dimensional correlations and "edge cases" of the original data, allowing models to be tested extensively without exposing real customer names or emails.

6. Who owns Big Data Testing? In 2026, it is owned jointly. Data Engineers write the actual pipeline code, but specialized Data Quality Assurance Engineers (SDETs with heavy Python/SQL skills) design the automated validation frameworks (like Great Expectations) that monitor the pipelines.

7. Can we test big data locally on our laptops? Only partially. While you can test a small "sample" of the ETL script logic locally (for example, with PySpark in a Docker container), you cannot reliably validate performance and data skew without deploying the code to a cloud cluster that mimics production scale.