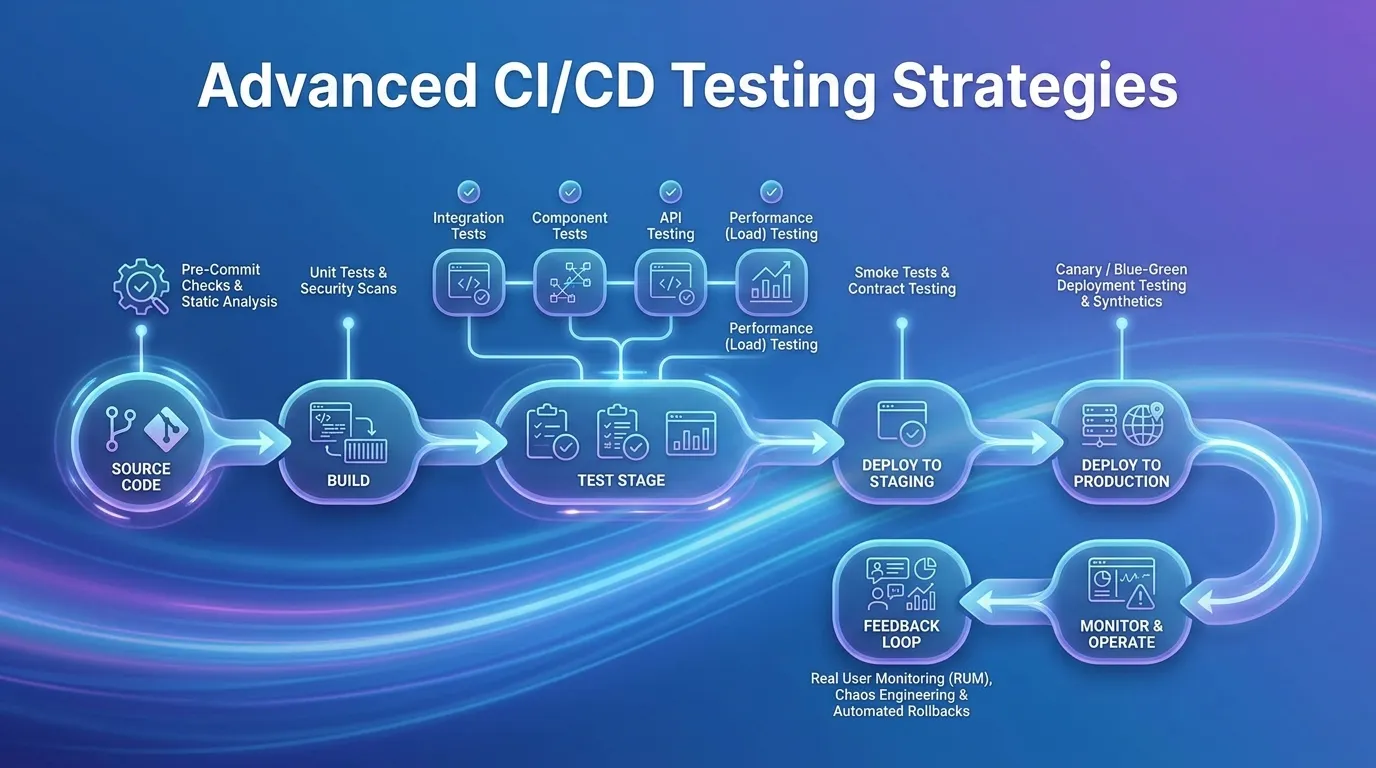

Advanced CI/CD Testing Strategies

The implementation of Continuous Integration and Continuous Deployment (CI/CD) fundamentally altered the cadence of software engineering. The promise was alluring: write code, commit it, and have it automatically built and deployed to production within minutes. However, as enterprise organizations scaled their DevOps practices, they encountered a rigid bottleneck. A CI/CD pipeline is merely a sophisticated conveyance mechanism; if the testing apparatus inside that pipeline is slow, brittle, or inherently flawed, the pipeline simply accelerates the delivery of broken software.

To achieve genuine continuous delivery without sacrificing stability, organizations must evolve past basic unit testing and primitive functional scripts. The modern enterprise requires advanced CI/CD testing strategies. These methodologies blend machine learning, concurrent execution, intelligent artifact management, and deep orchestration to create test suites that validate complex distributed microservices in minutes rather than hours. In this guide, we will analyze the methodologies required to transform an archaic, blocking test phase into a hyper-efficient, deterministic quality gate.

The Flaws of Legacy Pipeline Testing

Before implementing advanced architectures, it is vital to understand why basic testing strategies collapse under enterprise workloads.

The "All-or-Nothing" Antipattern

In rudimentary pipelines, every commit triggers the entire test suite. If a developer alters a single line of CSS on the front-end login page, the CI/CD pipeline mindlessly executes 15,000 backend database integration tests before allowing the merge. This results in hour-long pipeline execution times, destroying developer velocity and causing costly context switching.

The Menace of Flaky Tests

As test suites grow, non-deterministic (flaky) tests proliferate. These are tests that pass and fail intermittently without any underlying code changes, usually due to asynchronous timing issues or unstable network conditions. When developers lose faith in the CI/CD test results, they begin manually overriding the quality gates, defeating the purpose of automated integration.

Paradigm 1: Test Impact Analysis (TIA)

The most transformative of all advanced CI/CD testing strategies is Test Impact Analysis. TIA effectively answers a singular critical question: Which specific tests actually need to run right now?

The Mechanics of TIA

TIA combines static code analysis with execution tracing. During a baseline run, the TIA engine records which lines of source code each automated test executes. When a developer pushes a new commit, the engine inspects the files and lines that were modified, cross-references this diff against the execution map, and orchestrates a pipeline run containing only the affected tests. In a 15,000-test suite, a change to 10 lines might trigger just the 15 tests that exercise them, bypassing the remaining 14,985.
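The cross-referencing step can be sketched in a few lines of Python. This is a minimal illustration, not how any particular TIA product works: the `coverage_map` contents, the file paths, and the `select_impacted_tests` helper are all hypothetical, and the map is assumed to have been recorded during a baseline run.

```python
from typing import Dict, Set, Tuple

# Hypothetical execution map from a baseline run:
# test name -> set of (file, line) pairs that the test executed.
CoverageMap = Dict[str, Set[Tuple[str, int]]]


def select_impacted_tests(coverage: CoverageMap,
                          changed: Set[Tuple[str, int]]) -> Set[str]:
    """Return the tests whose recorded footprint overlaps the diff."""
    return {test for test, lines in coverage.items() if lines & changed}


# Illustrative data only; a real map would come from instrumentation.
coverage_map: CoverageMap = {
    "test_login_form": {("auth/login.py", 10), ("auth/login.py", 11)},
    "test_password_reset": {("auth/reset.py", 5)},
    "test_invoice_total": {("billing/invoice.py", 42)},
}

changed_lines = {("auth/login.py", 11)}
print(sorted(select_impacted_tests(coverage_map, changed_lines)))
# prints ['test_login_form']
```

A change that touches nothing in the map (a new file, for instance) selects no tests in this sketch, so a practical setup would likely fall back to the full suite in that case.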

The Business Value

By tracing which tests exercise which code, TIA can reduce a 45-minute monolithic test suite to a targeted execution cycle of roughly 3 minutes. Developers receive near-instantaneous feedback, and the quality gate keeps its integrity as long as the execution map is kept current. Platforms like SeaLights and Launchable (using machine learning) are pioneering this space.

Paradigm 2: Ephemeral Environments and Shift-Left Infrastructure

You cannot run advanced integration tests without a stable environment. Hardcoded, shared staging servers are fundamentally incompatible with modern CI/CD scale.

The Shared Staging Bottleneck

If twenty developers merge code into the main branch simultaneously, and all tests run against a single "Staging DB," data collisions are inevitable. One test might insert a specific user record while another test simultaneously deletes it, causing endless flaky test failures.

Ephemeral Environments as Code

Modern testing strategies rely on massive parallelization powered by Kubernetes. Every time a Pull Request (PR) is opened, the CI/CD pipeline utilizes Infrastructure as Code (IaC) to spin up an entirely isolated, temporary "Ephemeral Environment."

- The tests execute in total isolation against this brand-new, sterile micro-architecture.

- Upon test completion, whether the run passed or failed, the temporary environment is immediately destroyed. Every run therefore starts from the same known state, which keeps environments consistent and makes integration tests far more reproducible, even at high concurrency.
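The create, test, destroy lifecycle described above maps naturally onto a context manager. The sketch below is an assumption-laden illustration: the `pr-<number>-env` naming scheme and the `fake_create` / `fake_destroy` functions are hypothetical stand-ins for whatever IaC or Kubernetes calls a real pipeline would make.

```python
from contextlib import contextmanager
from typing import Callable, Iterator, List


@contextmanager
def ephemeral_environment(pr_number: int,
                          create: Callable[[str], None],
                          destroy: Callable[[str], None]) -> Iterator[str]:
    """Provision an isolated environment for one PR and always tear it down."""
    name = f"pr-{pr_number}-env"  # hypothetical naming scheme
    create(name)
    try:
        yield name
    finally:
        # Runs whether the tests passed or raised.
        destroy(name)


# Stand-ins for real provisioning calls; they only record what happened.
events: List[str] = []


def fake_create(name: str) -> None:
    events.append(f"create {name}")


def fake_destroy(name: str) -> None:
    events.append(f"destroy {name}")


try:
    with ephemeral_environment(1234, fake_create, fake_destroy) as env:
        events.append(f"test in {env}")
        raise AssertionError("simulated test failure")
except AssertionError:
    pass

print(events)
# prints ['create pr-1234-env', 'test in pr-1234-env', 'destroy pr-1234-env']
```

Putting the teardown in `finally` is the point of the design: a failing test cannot leave an orphaned environment behind.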

Paradigm 3: Chaos Engineering in the Pipeline

Traditional testing checks if the application functions when everything is perfect. Advanced CI/CD testing strategies verify how the application behaves when the underlying infrastructure catastrophically fails.

Implementing Continuous Chaos

Chaos Engineering is usually reserved for manual "game days" against production. Advanced organizations, however, build chaos directly into their CI/CD release pipelines. Tools like Gremlin or Chaos Mesh are integrated into the pipeline to intentionally inject severe faults during the staging test phase.

- Latency Injection: While an End-to-End browser test is running, the pipeline artificially injects 500ms of latency into the payment processing microservice. The test ensures the front-end correctly renders a "Processing..." spinner rather than experiencing a hard timeout crash.

- Pod Termination: The pipeline deliberately kills a critical Kubernetes pod mid-test to ensure traffic is rerouted to the remaining healthy pods without dropping user sessions.
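The latency-injection scenario can be modeled without any chaos tooling at all. The following sketch does not use Gremlin or Chaos Mesh; `payment_service` and `checkout_status` are hypothetical stand-ins, and the 0.1-second UI budget is an assumed value chosen only to keep the example fast.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def payment_service(injected_latency_s: float = 0.0) -> str:
    """Hypothetical downstream call; the sleep models the injected fault."""
    time.sleep(injected_latency_s)
    return "paid"


def checkout_status(injected_latency_s: float, ui_budget_s: float) -> str:
    """Return what the UI should display: the result, or a spinner label."""
    with ThreadPoolExecutor(max_workers=1) as pool:
        future = pool.submit(payment_service, injected_latency_s)
        try:
            return future.result(timeout=ui_budget_s)
        except FutureTimeout:
            # Degrade gracefully instead of surfacing a hard timeout.
            return "Processing..."


# Healthy dependency: the real result is shown.
print(checkout_status(injected_latency_s=0.0, ui_budget_s=0.1))
# 500 ms of injected latency: the fallback is shown.
print(checkout_status(injected_latency_s=0.5, ui_budget_s=0.1))
```

In a real pipeline the fault would be injected at the network or infrastructure layer rather than passed as a parameter, but the assertion stays the same: under latency, the user sees the fallback, not a crash.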

Paradigm 4: Service Virtualization and Contract Testing

Testing microservices presents a unique dependency nightmare. If Service A relies on Service B, and Service B relies on a third-party legacy mainframe, how does the team building Service A run their isolated pipeline?

The Death of End-to-End (E2E) Testing

Relying heavily on massive UI-driven E2E tests to validate microservices is an anti-pattern. E2E tests are slow, notoriously brittle, and difficult to maintain. The solution is shifting down the testing pyramid through Service Virtualization and Consumer-Driven Contract Testing.

Consumer-Driven Contracts (Pact)

Instead of spinning up every microservice to see if they communicate correctly, engineering teams formulate explicit "Contracts." Using tools like Pact, the "Consumer" of an API defines exactly which requests it will send and what JSON payload it expects to receive. During the CI/CD run of the "Provider" API, the pipeline runs a lightweight test solely to verify that the Provider's output matches the pre-agreed Consumer Contract. This greatly reduces the need for heavy, integrated E2E environments while catching breaking changes before they are merged.
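To make the idea concrete, here is a hand-rolled contract check in plain Python. It deliberately does not use Pact's API; the `CONSUMER_CONTRACT` fields and the `contract_violations` helper are hypothetical, and a real contract would also cover the request, status codes, and nested structures.

```python
from typing import Any, Dict, List

# Hypothetical contract published by the consumer: field name -> expected type.
CONSUMER_CONTRACT: Dict[str, type] = {
    "order_id": str,
    "total_cents": int,
    "currency": str,
}


def contract_violations(response: Dict[str, Any],
                        contract: Dict[str, type]) -> List[str]:
    """List every way the provider response breaks the consumer contract."""
    problems = []
    for field, expected in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected):
            actual = type(response[field]).__name__
            problems.append(
                f"wrong type for {field}: expected {expected.__name__}, got {actual}"
            )
    return problems


# Extra fields are tolerated; only what the consumer relies on is checked.
good = {"order_id": "A-1", "total_cents": 1999, "currency": "EUR", "note": "gift"}
bad = {"order_id": "A-1", "total_cents": "19.99"}

print(contract_violations(good, CONSUMER_CONTRACT))
# prints []
print(contract_violations(bad, CONSUMER_CONTRACT))
# prints ['wrong type for total_cents: expected int, got str', 'missing field: currency']
```

The provider pipeline would fail the build whenever the returned list is non-empty.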

Implementing the Strategies: A Phased Approach

Re-architecting an entire delivery pipeline must be handled incrementally to avoid disrupting business continuity.

Phase 1: Quarantine Flaky Tests

Do not attempt advanced orchestration until you trust your base test suite. Implement strict thresholds. If an automated test fails and then passes on an immediate retry against the same code, the CI tool must automatically move that test to a "Quarantine" suite. Quarantined tests are still run and reported but do not block the pipeline. Engineering must dedicate time to fixing or deleting quarantined tests.
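The retry rule above reduces to a small decision function. This is a sketch under assumptions: `classify` and the `scripted` helper are hypothetical names, and a single immediate retry is used as the threshold, whereas a real CI tool might require several observations.

```python
from typing import Callable, Iterable, Literal

Verdict = Literal["pass", "fail", "quarantine"]


def classify(run_test: Callable[[], bool]) -> Verdict:
    """Apply the rule: fail followed by pass on immediate retry means flaky."""
    if run_test():
        return "pass"
    # First attempt failed: retry once against the same code.
    return "quarantine" if run_test() else "fail"


def scripted(outcomes: Iterable[bool]) -> Callable[[], bool]:
    """Hypothetical test double that returns pre-scripted results in order."""
    it = iter(outcomes)
    return lambda: next(it)


print(classify(scripted([True])))          # prints pass
print(classify(scripted([False, True])))   # prints quarantine
print(classify(scripted([False, False])))  # prints fail
```

Only the `fail` verdict should block the merge; `quarantine` is reported and routed to the non-blocking suite.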

Phase 2: Parallelization and Containerization

Break up your massive monolithic test suite. Refactor tests to be completely atomic (they do not rely on the state of previous tests). Once atomic, orchestrate your CI runner to execute 50 testing containers in parallel, radically reducing total execution time.

Phase 3: Introduce Contract Testing

Identify the most brittle integration paths between your microservices. Replace the flaky UI-level tests with lightweight, lightning-fast Consumer-Driven Contract tests executed at the API layer.

Phase 4: Machine Learning and TIA

Finally, integrate intelligence. Deploy Test Impact Analysis tools to analyze GitHub diffs dynamically, ensuring the pipeline executes only the subset of tests needed to verify each change.

Summary

To scale software delivery, testing within pipelines requires a distinct architectural upgrade:

- Abandon the Monolithic Run: Stop executing the entire test suite on every minor commit.

- Adopt Test Impact Analysis: Map code changes to specific tests, using execution tracing or machine learning, to drastically accelerate feedback loops.

- Destroy Shared Environments: Shift toward Kubernetes-based Ephemeral Environments to ensure clean data state and prevent test collisions.

- Inject Pipeline Chaos: Introduce intentional latency and infrastructure failures during staging strictly to test error handling and resilience.

- Prioritize Contract Testing: Shift away from bloated UI E2E testing in favor of rapid API contract verification to validate microservice interactions.

- Quarantine Ruthlessly: Automatically move flaky tests into a non-blocking quarantine suite before they push developers to bypass the pipeline's quality gates.

Conclusion

The CI/CD pipeline is the central nervous system of any modern technology organization. However, its speed is directly dictated by the intelligence of its testing mechanisms. Throwing more servers at a slow, brittle testing suite is a losing battle. By evolving past basic automation and aggressively implementing advanced CI/CD testing strategies—ranging from mathematical test reduction via TIA to consumer-driven contract architecture—enterprises can achieve the ultimate goal of DevOps: the ability to deploy robust, heavily validated code dozens of times a day without fear of catastrophic regression.

FAQs

1. What is the difference between Continuous Integration and Continuous Delivery? Continuous Integration (CI) is the automated process of merging developer code, compiling it, and running foundational tests. Continuous Delivery (CD) takes that validated codebase and guarantees it is in a deployable state, automatically pushing it to staging and preparing it for a one-click release to production.

2. Why are End-to-End (E2E) tests discouraged in CI/CD? They are not entirely discouraged; they are just overused. E2E tests simulating real user browsers are slow to run and highly sensitive to minor UI changes. An advanced strategy utilizes thousands of fast unit/contract tests, and only a tiny handful of critical E2E tests to validate the final pipeline payload.

3. What defines a "Flaky Test"? A flaky test yields varying results (pass/fail) when run multiple times against the exact same codebase. Flaky tests are incredibly destructive because they erode developers' trust in the CI/CD pipeline's validation results.

4. How does Test Impact Analysis (TIA) know which tests to run? Advanced TIA tools use code instrumentation to track which lines of source code are accessed during each test's execution, and build a matrix of tests against code. When a code commit creates a diff, the tool cross-references the diff against the matrix to select only the tests that overlap with the changed lines.

5. What is Service Virtualization in pipeline testing? Service Virtualization involves deploying a lightweight mock version of a heavy dependency (like an external payment gateway) directly inside the CI runner. This allows the pipeline to test integrations without requiring network calls to actual, slow exterior production services.

6. Can Chaos Engineering cause pipeline failures? Yes, that is the literal intent. Injecting chaos into a staging pipeline ensures that the application's fallback procedures (like circuit breakers and custom error pages) successfully mitigate the disaster before the code is granted access to the production environment.

7. How do Shift-Left principles apply to CI/CD? Shifting left in CI/CD involves moving complex validations—like infrastructure security scanning or heavy integration tests—away from the final staging deployment, pushing them earlier into the developer's local IDE or the very first phase of the pull request validation.

8. Is Contract Testing meant to replace API Testing? No. API testing validates the internal logic and functionality of an endpoint (e.g., "Does this calculate tax correctly?"). Contract testing only validates communication structure (e.g., "Does this endpoint send the payload using the exact JSON keys the other team expects?").

9. How do we test database migrations inside a pipeline? Testing database migrations requires spinning up an ephemeral clone of the production database structure. The pipeline executes the automated schema migration against the clone, runs the application tests to ensure data integrity, and immediately destroys the clone upon validation.
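As an illustration of the migration check described in the last answer, the sketch below uses an in-memory SQLite database as a stand-in for an ephemeral clone of the production schema. The `users` table, the `display_name` column, and the `run_migration_check` helper are all hypothetical; a real pipeline would run its own migration tool against the same engine used in production.

```python
import sqlite3

# Hypothetical "production" schema and the migration under test.
SCHEMA_V1 = "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
MIGRATION_V2 = "ALTER TABLE users ADD COLUMN display_name TEXT NOT NULL DEFAULT ''"


def run_migration_check() -> list:
    """Build a throwaway clone, migrate it, and return the surviving rows."""
    conn = sqlite3.connect(":memory:")  # ephemeral: discarded on close
    try:
        conn.execute(SCHEMA_V1)
        conn.execute("INSERT INTO users (email) VALUES (?)", ("a@example.com",))
        conn.execute(MIGRATION_V2)
        # Data integrity check: existing rows must survive the migration.
        return conn.execute(
            "SELECT id, email, display_name FROM users"
        ).fetchall()
    finally:
        conn.close()  # destroys the clone whether the check passed or not


print(run_migration_check())
# prints [(1, 'a@example.com', '')]
```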